|

IF YOU ARE HAVING A PROBLEM

- Take a look at the logs in C:\Program Files\CodeProject\AI\logs and see if there's anything in there that screams 'something broke'.

- Check the FAQs in the CodeProject.AI Server documentation

- Make sure you've tested the server using the Explorer (blue link, top middle of the dashboard) to ensure it's a server issue rather than something else such as Blue Iris or another app using CodeProject.AI server.

- If there's no obvious answer, then copy and paste into a message the contents of the System Info tab, describe what you are doing, and what you see, and what you would expect.

Always include a copy and paste from the System Info tab of the dashboard. It gives us a ton of info on your setup. If an individual module is failing, click the 'Info' button to the right of the module's name in the status list and copy and paste that info too.
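If the logs folder is large, a quick scan can help surface the lines worth pasting into a message. The following is only a rough sketch: the keyword list is a guess at what 'something broke' looks like, and the function name find_suspicious_lines is made up for illustration.

```python
from pathlib import Path

# Assumed keywords; adjust to whatever your logs actually contain.
KEYWORDS = ("error", "exception", "traceback", "failed", "unable to")


def find_suspicious_lines(log_dir, keywords=KEYWORDS):
    """Return (file name, line number, text) for every line containing a keyword."""
    hits = []
    for path in sorted(Path(log_dir).glob("*")):
        if not path.is_file():
            continue
        # errors="replace" avoids crashing on odd bytes in a log file.
        with path.open("r", encoding="utf-8", errors="replace") as handle:
            for number, line in enumerate(handle, start=1):
                lowered = line.lower()
                if any(word in lowered for word in keywords):
                    hits.append((path.name, number, line.rstrip()))
    return hits


# Example call (Windows default location mentioned above):
# for name, number, text in find_suspicious_lines(r"C:\Program Files\CodeProject\AI\logs"):
#     print(f"{name}:{number}: {text}")
```

This does not replace the System Info copy and paste; it just narrows down which log lines to include alongside it.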

How to reinstall a module

Option 1: Go to the install modules tab on the dashboard and try re-installing the package. Make sure you have enough disk space and a reliable internet connection.

Option 2: (Option 1 with a vengeance): If that fails, head to the module's folder ([app root]\modules\module-id), open a terminal in admin mode, and run ..\..\setup. This will force a manual reinstall using the install script.

Docker: In Docker you will need to open a terminal into the Docker container. You can do this using Docker Desktop, Visual Studio Code with the Docker remote extension, or on the command line using docker attach. Then do a cd /app/modules/module-id, where module-id is the id of the module you need to reinstall. Next, run sudo bash ../../setup.sh --verbosity info to force a manual reinstall of that module. (Set verbosity to quiet, info or loud to get less or more info.)
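For anyone who wants to script the Docker steps, here is a minimal sketch of the same two actions (change into the module folder, run the setup script). It is meant to be run inside the container. The helper names are hypothetical; only the folder, the script path and the three verbosity values come from the instructions above.

```python
import subprocess
from pathlib import PurePosixPath

# The three verbosity values named in the instructions.
VERBOSITY_LEVELS = ("quiet", "info", "loud")


def build_reinstall_command(verbosity="info"):
    """Build the setup command, rejecting verbosity values not listed above."""
    if verbosity not in VERBOSITY_LEVELS:
        raise ValueError(f"verbosity must be one of {VERBOSITY_LEVELS}")
    return ["sudo", "bash", "../../setup.sh", "--verbosity", verbosity]


def module_folder(module_id, modules_root="/app/modules"):
    """Return the folder the setup script should be run from."""
    return str(PurePosixPath(modules_root) / module_id)


def reinstall_module(module_id, verbosity="info"):
    """Run the setup script from inside the module's folder."""
    return subprocess.run(
        build_reinstall_command(verbosity),
        cwd=module_folder(module_id),
        check=True,  # raise if the setup script exits with an error
    )
```

Calling reinstall_module("module-id", "loud") would be the scripted equivalent of the cd plus setup.sh steps, with module-id replaced by the real id of the module.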

cheers

Chris Maunder

modified 18-Feb-24 15:48pm.

|

|

|

|

|

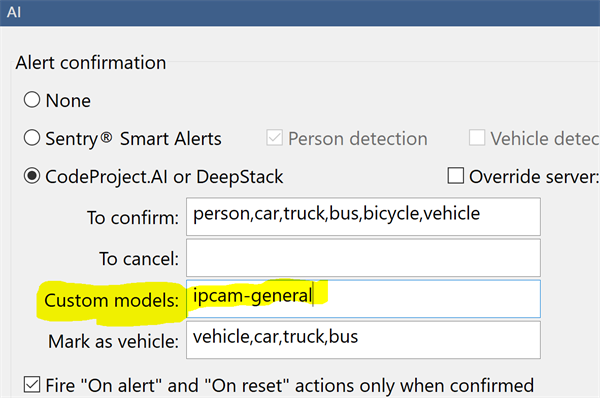

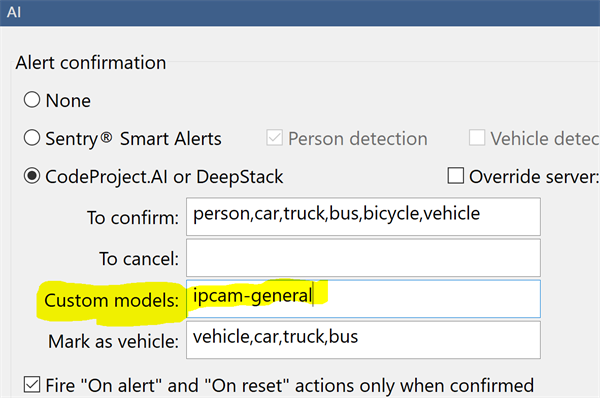

If you are a Blue Iris user and you are using custom models, then you will have noticed that the option in Blue Iris to set the custom model location is greyed out. This is because Blue Iris does not currently make changes to CodeProject.AI Server's settings. It can be done by manually starting CodeProject.AI with command line parameters (not a great solution), or editing the module settings files (a little messy), or setting system-wide environment variables (way easier). For version 1.6 we added an API to allow any app to change our settings programmatically, and we take care of stopping/restarting things and persisting the changes.

So: Blue Iris doesn't currently change CodeProject.AI Server's settings, so it doesn't provide you a way to change the custom model folder location from within Blue Iris.

Blue Iris will still use the contents of this folder to determine the calls it makes. If you don't specify a model to use in the Custom Models textbox, then Blue Iris will use all models in the custom models folder that it knows about.

Here we've specified a specific model to use. The Blue Iris help file explains more about how this works, including inclusive and exclusive filters on the models it finds.

CodeProject.AI Server doesn't know about Blue Iris' folder, so it can't tell what models it may be expected to use, nor can it tell Blue Iris about what models CodeProject.AI server has available. Our API allows Blue Iris to get a list of the AI models installed with CodeProject.AI Server, and also to set the folder where these models reside. But Blue Iris doesn't, yet, use that API.

So we do a hack.

At install time we sniff the registry to find where Blue Iris thinks the custom models should be. We then make empty copies of the models that we have, and copy them into that folder. If the folder doesn't exist (eg you were using C:\Program Files\CodeProject\AI\AnalysisLayer\CustomObjectDetection\assets, which no longer exists) then we create that folder, and then copy over the empty files.

When Blue Iris looks in that folder to decide what custom calls it can make, it sees the models, notes their names, and uses those names in the calls. CodeProject.AI Server has those models, so when the calls come through we can process them.

Blue Iris doesn't use the models. It uses the list of model names.
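To make the idea concrete, here is a small sketch of what "empty copies" amounts to: zero-byte files that carry only the model names. This is not the installer's actual code. The function name, the "*.pt" pattern and the choice to skip files that already exist are all assumptions for illustration.

```python
from pathlib import Path


def mirror_model_names(server_models_dir, blue_iris_models_dir, pattern="*.pt"):
    """Create empty placeholder files named after each model the server has.

    Returns the names of the placeholders that were created.
    """
    source = Path(server_models_dir)
    target = Path(blue_iris_models_dir)
    # Create the Blue Iris folder if it does not exist yet.
    target.mkdir(parents=True, exist_ok=True)

    created = []
    for model in sorted(source.glob(pattern)):
        placeholder = target / model.name
        # Assumption: never touch a file that is already there,
        # since it may be a real model the user put in place.
        if not placeholder.exists():
            placeholder.touch()
            created.append(placeholder.name)
    return created
```

The placeholders are enough for anything that only reads file names from the folder; the real model files stay in the server's own custom models folder.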

If you have your own models in the Blue Iris folder

You will need to copy them to the CodeProject.AI server's custom model folder (by default this is C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\custom-models)

If you've modified the registry and have your own custom models

If you were using a folder in C:\Program Files\CodeProject\AI\AnalysisLayer\CustomObjectDetection\ (which no longer existed after the upgrade, but was recreated by our hack) you'll need to re-copy your custom model into that folder.

The simplest solutions are:

- Modify the registry (Computer\HKEY_LOCAL_MACHINE\SOFTWARE\Perspective Software\Blue Iris\Options\AI, key 'deepstack_custompath') so Blue Iris looks in C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\custom-models for custom models, and copy your models into there.

or

- Modify the C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\modulesettings.json file and set CUSTOM_MODELS_DIR to be whatever Blue Iris thinks the custom model folder is.
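If you would rather script the second option than edit the file by hand, something along these lines may work. It is a sketch under two assumptions: that modulesettings.json parses as plain JSON (if your copy contains comments, json.load will fail), and that CUSTOM_MODELS_DIR appears as a key somewhere in the nested settings. The exact layout of the file is not described here, so the code simply searches for the key wherever it is. Back up the file first.

```python
import json


def set_env_value(settings, key, value):
    """Set every occurrence of `key` in nested dicts/lists; return how many were set."""
    changed = 0
    if isinstance(settings, dict):
        for name, child in settings.items():
            if name == key:
                settings[name] = value
                changed += 1
            else:
                changed += set_env_value(child, key, value)
    elif isinstance(settings, list):
        for child in settings:
            changed += set_env_value(child, key, value)
    return changed


def update_custom_models_dir(settings_path, new_dir):
    """Point CUSTOM_MODELS_DIR at new_dir and write the file back if anything changed."""
    with open(settings_path, "r", encoding="utf-8") as handle:
        settings = json.load(handle)  # assumes plain JSON without comments
    changed = set_env_value(settings, "CUSTOM_MODELS_DIR", new_dir)
    if changed:
        with open(settings_path, "w", encoding="utf-8") as handle:
            json.dump(settings, handle, indent=2)
    return changed
```

A return value of 0 means the key was not found and the file was left untouched, which is a hint that the layout differs from what this sketch assumes.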

cheers

Chris Maunder

|

|

|

|

|

I searched around and couldn't find anything. I just upgraded to 2.6.2, running in Docker on an Ubuntu VM (Proxmox). Have been running 2.1.1 successfully for quite a while now. I'm using with BlueIris.

Detection seems to be working, but I'm getting these spurious errors about "catdog_m.pt" in the logs that have me a bit perplexed. I've searched around and can't find anything. Any ideas? I ask because I am hoping to get better detection of my dog, who is a fairly large breed and is often identified as a "person" on my backyard cameras. Thanks in advance!

17:10:29:Object Detection (YOLOv5 6.2): /app/preinstalled-modules/ObjectDetectionYOLOv5-6.2/custom-models/catdog_m.pt does not exist

17:10:29:Object Detection (YOLOv5 6.2): Unable to create YOLO detector for model catdog_m

17:10:29:Object Detection (YOLOv5 6.2): Detecting using catdog_m

17:10:29:Object Detection (YOLOv5 6.2): /app/preinstalled-modules/ObjectDetectionYOLOv5-6.2/custom-models/catdog_m.pt does not exist

17:10:29:Response rec'd from Object Detection (YOLOv5 6.2) command 'custom' (...7ae351)

17:10:29:Object Detection (YOLOv5 6.2): Unable to create YOLO detector for model catdog_m

|

|

|

|

|

I'm using ipcam-general with Agent DVR but CPAI doesn't seem to load the custom model automatically after a restart.

Logs say:

SetFailed: Message from CPAI: No custom models found at CoreLogic.AI.ObjectRecognizer.Detect()

So Object recognition doesn't work until I click a "Get Models" button on Agent DVR (not sure what this actually triggers).

https://old.reddit.com/r/ispyconnect/comments/1cxj135/settings_bug_after_restart/

|

|

|

|

|

So, I am trying to get Blue Iris to alert only on certain vehicles. I live in a cul-de-sac, so people make u-turns all the time. What I would like, is for only certain vehicles to confirm the alerts. I figure, I will have to train a model on which vehicles I would like to confirm with, but also, if I could just do certain colors, that would send me in the right direction for now. Is there a way to confirm an alert if say a red car drives by?

I realize you will probably ask, well, don't you want the strangers' cars to alert you as well? Not so much. This is more for like automation confirmation and back-up than anything. But also, with this, I can set a rule to have certain alerts not trigger when my mom's silver car arrives. Like, if her car arrives, and then the front door cameras detect motion, "Hey! Mom's Here!" Something like that. And no, I am not a 15 year old kid trying to make sure mom doesn't catch me doing something wrong. I'm coming up on 40, and just like when my mom visits.

Are there tutorials out there with how to train a model, or anything about specific colors confirming alerts. Some things are just hard to search for, you know?

|

|

|

|

|

I am new to machine learning and stuff.

I wanted to do custom object detection in C# using webcam at runtime.

The thing is, as per the client's request I did this through Roboflow, but the client wanted it fully offline due to no internet and data safety reasons. Since Roboflow did not provide this feature, I looked into YOLO. To try the new method I downloaded a small public dataset for balloons from Roboflow in yolov5pytorch format. This dataset has blue and red balloons as classes.

Now I just needed to train this and implement it in a C# WinForms project.

I opened the YOLOv5 notebook in Google Colab to train my dataset and followed each step. After training I tested it on a video in Google Colab and it was detecting red and blue balloons.

Now my question is how to implement this custom-trained model in my C# code for object detection.

Since I have a custom-trained YOLOv5 model, which tutorial should I follow to implement this?

I tried to download YOLOv5 in ONNX format and use it with ML.NET but did not understand it. I followed a tutorial where the guy was working with a Custom Vision ONNX model and followed each step, which failed. I do not understand in depth how it is working.

So now I am stuck with a trained YOLOv5 model in Google Colab. What steps should I follow, or is there any tutorial link, to integrate this in my C# project?

Also, can I use this trained YOLOv5 model in Python and integrate the Python script in C#? Remember it has to detect at runtime and offline.

What I have tried:

Tried Roboflow (no offline feature)

Trained a custom dataset on Google Colab, downloaded the ONNX model of YOLOv5 and tried to implement it in a WinForms project with the help of ML.NET.

|

|

|

|

|

I don't have one of those new shiny arm64 Windows machines, but I do have an old M1 Mac mini. Broadcom, the new owners of VMware, make it insanely hard to find the VMware Pro download, but given that VMware now officially supports Windows on Arm, and provides a free version, it's worth the spelunking.

The server and dev setup are mostly smooth. There's a missing vs_redist that I had to manually install (will need to add that to the setup), but other than that all easy.

We have one fairly major issue with the .NET Object detection module failing to load due to what seems an old .NET bug that's marked as closed. I'll try and spend some time in the next few days hammering away at it.

And, obviously, if anyone has a spare shiny new arm64 Windows laptop they don't need...

cheers

Chris Maunder

|

|

|

|

|

I've installed CodeProject AI 2.6.4 on UBUNTU 22.04.4 LTS

The install went well

I cannot add the License Plate reader Module.

When I click on the install button, I get an error: Error in Install ALPR: 404

Any ideas?

|

|

|

|

|

I tried to install another module and this gave a 404 message, so it's not specific to the License Plate module. I tried the 'do not use download cache' option.

modified 2 days ago.

|

|

|

|

|

Are you able to download the module manually?

(If you do manage to do that, you can unzip the ALPR zip into the /modules folder, then open a terminal into the ALPR folder and run bash ../../setup.sh to run the installer)
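As a rough illustration of the unzip step, here is a sketch using Python's zipfile module. Whether the downloaded archive already contains a top-level ALPR folder is not stated above, so this simply extracts into the modules folder as described and reports the top-level entries so you can check what landed where. The function name is hypothetical.

```python
import zipfile
from pathlib import Path


def unpack_module(zip_path, modules_root):
    """Extract a module zip into the modules folder; return its top-level entries."""
    modules_root = Path(modules_root)
    modules_root.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(modules_root)
        # Names inside a zip always use "/" as the separator.
        top_level = sorted({name.split("/")[0] for name in archive.namelist() if name})
    return top_level
```

After that, the remaining step is the one from the post: open a terminal in the module's folder and run bash ../../setup.sh.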

cheers

Chris Maunder

|

|

|

|

|

That worked perfectly!

|

|

|

|

|

I've been running the codeproject ai server with Blue Iris for many months without issue, but have recently reinstalled it after upgrading to a CUDA supported GPU. It's now completely broken and shuts down shortly after booting.

Here's the log error

2024-05-18 15:13:28: detect_adapter.py: Traceback (most recent call last):

2024-05-18 15:13:28: detect_adapter.py: File "C:\Program Files\CodeProject\AI\modules\ObjectDetectionYOLOv5-6.2\detect_adapter.py", line 12, in <module>

2024-05-18 15:13:28: detect_adapter.py: from request_data import RequestData

2024-05-18 15:13:28: detect_adapter.py: File "../../SDK/Python\request_data.py", line 8, in <module>

2024-05-18 15:13:28: detect_adapter.py: from PIL import Image

2024-05-18 15:13:28: detect_adapter.py: ModuleNotFoundError: No module named 'PIL'

I'm trying to use Object Detection (YOLOv5 6.2) since I have CUDA v11.8 on a GeForce GTX 1050 ti

How do I fix this error?

I should mention that the YOLOv5.NET isn't working either, and that both of these models aren't giving me the option of running them on the GPU.

|

|

|

|

|

Try uninstalling the modules then reinstall using Do not use download cache
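After reinstalling, one way to confirm the 'No module named PIL' problem is gone is to ask the module's own Python whether the package can be found. This is a sketch, and it must be run with the Python interpreter the module uses (a module-specific virtual environment, which is an assumption about this setup), not the system Python. The function name is made up.

```python
import importlib.util


def missing_packages(names):
    """Return the top-level package names that this interpreter cannot import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Example: an empty list means PIL (installed by the Pillow package) is available.
# print(missing_packages(["PIL"]))
```

If PIL still shows up as missing, the module's Python packages did not install correctly, which matches the traceback in the question.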

|

|

|

|

|

2.6.4 Linux installer on Ubuntu 22.04 is not creating services. Only way I can get it to run is manually with start.sh.

Anyone able to shed light on manual service creation process so I don't need to keep a shell open and it starts at boot?

Thanks

|

|

|

|

|

Figured it out.

/etc/systemd/system/codeproject.ai-server.service had DOTNET_ROOT=/usr/lib64/dotnet, but the path on my Ubuntu 22.04 x64 system was actually /usr/lib/dotnet.

Service is operating correctly now.
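For anyone hitting the same thing, a quick way to spot the mismatch is to read the DOTNET_ROOT value out of the service file and check whether that folder exists. A minimal sketch, assuming the value appears in the file as DOTNET_ROOT=/some/path; the function names and the list of candidate folders (taken from the two paths in this post) are for illustration only.

```python
import os
import re


def dotnet_root_from_unit(unit_text):
    """Return the DOTNET_ROOT value found in the service file text, or None."""
    match = re.search(r"DOTNET_ROOT=([^\s\"']+)", unit_text)
    return match.group(1) if match else None


def check_dotnet_root(unit_text, candidates=("/usr/lib/dotnet", "/usr/lib64/dotnet")):
    """Report the configured path, whether it exists, and which candidates do exist."""
    configured = dotnet_root_from_unit(unit_text)
    return {
        "configured": configured,
        "configured_exists": bool(configured) and os.path.isdir(configured),
        "existing_candidates": [path for path in candidates if os.path.isdir(path)],
    }


# Example:
# with open("/etc/systemd/system/codeproject.ai-server.service") as handle:
#     print(check_dotnet_root(handle.read()))
```

If configured_exists is False and one of the candidates does exist, editing the service file to use that path is the fix described above.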

|

|

|

|

|

I had the same issue. After updating the service file, I needed to enable the service:

sudo systemctl enable codeproject.ai-server.service

When I look at the service status I get the following:

~$ systemctl status codeproject.ai-server.service

May 21 13:19:04 nuc codeproject.ai-server[776]: Debug Current Version is 2.6.4

May 21 13:19:04 nuc codeproject.ai-server[776]: Infor *** Server: This is a new, unreleased version

May 21 13:19:07 nuc codeproject.ai-server[776]: Trace face.py: Vision AI services setup: Retrieving environment variables...

May 21 13:19:07 nuc codeproject.ai-server[776]: Debug face.py: APPDIR: /usr/bin/codeproject.ai-server-2.6.4/modules/FacePro>

May 21 13:19:07 nuc codeproject.ai-server[776]: Debug face.py: PROFILE: desktop_cpu

May 21 13:19:07 nuc codeproject.ai-server[776]: Debug face.py: USE_CUDA: False

May 21 13:19:07 nuc codeproject.ai-server[776]: Debug face.py: DATA_DIR: /etc/codeproject/ai

May 21 13:19:07 nuc codeproject.ai-server[776]: Debug face.py: MODELS_DIR: /usr/bin/codeproject.ai-server-2.6.4/modules/FacePro>

May 21 13:19:07 nuc codeproject.ai-server[776]: Debug face.py: MODE: MEDIUM

May 21 13:19:07 nuc codeproject.ai-server[776]: Trace face.py: Running init for Face Processing

Not sure why it states unreleased version?

|

|

|

|

|

Is there a command line to install the CodeProject.AI Server executable installer for Windows silently?

The goal would be to deploy the install without user prompts.

|

|

|

|

|

Thanks very much for your inquiry. The answer is that there is not yet a reliably tested command line for installing the CodeProject.AI Server Windows installer silently. Something to consider for the future.

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

My use case is that I want to see more details about the detection requests - parameters submitted, origin, etc. I got into CPAI because of Blue Iris but it's working far from how I expect it to work and I would like to understand more where the various requests are coming from, especially related to static object detection which is at best confusing.

|

|

|

|

|

Try the docs

Is there anything specific we can help with?

cheers

Chris Maunder

|

|

|

|

|

I get this when trying to install the Sound Classifier.

it does appear to work, but also have this in log:

08:47:54:SoundClassifierTF: C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\bin\windows\python39\venv\lib\site-packages\scipy\__init__.py:146: UserWarning: A NumPy version >=1.16.5 and <1.23.0 is required for this version of SciPy (detected version 1.26.4

08:47:54:SoundClassifierTF: warnings.warn(f"A NumPy version >={np_minversion} and <{np_maxversion}"

08:47:55:SoundClassifierTF: 2024-05-15 08:47:54.989982: I tensorflow/core/util/port.cc:113] oneDNN custom operations are on. You may see slightly different numerical results due to floating-point round-off errors from different computation orders. To turn them off, set the environment variable `TF_ENABLE_ONEDNN_OPTS=0`.

08:47:57:SoundClassifierTF: WARNING:tensorflow:From C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\bin\windows\python39\venv\lib\site-packages\keras\src\losses.py:2976: The name tf.losses.sparse_softmax_cross_entropy is deprecated. Please use tf.compat.v1.losses.sparse_softmax_cross_entropy instead.

08:47:58:SoundClassifierTF: WARNING:tensorflow:From C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\sound_classification.py:15: The name tf.disable_v2_behavior is deprecated. Please use tf.compat.v1.disable_v2_behavior instead.

08:47:58:SoundClassifierTF: WARNING:tensorflow:From C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\bin\windows\python39\venv\lib\site-packages\tensorflow\python\compat\v2_compat.py:108: disable_resource_variables (from tensorflow.python.ops.variable_scope) is deprecated and will be removed in a future version.

08:47:58:SoundClassifierTF: Instructions for updating:

08:47:58:SoundClassifierTF: non-resource variables are not supported in the long term

08:47:58:SoundClassifierTF: WARNING:tensorflow:From C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\sound_classification.py:16: The name tf.logging.set_verbosity is deprecated. Please use tf.compat.v1.logging.set_verbosity instead.

08:48:01:SoundClassifierTF: 2024-05-15 08:48:01.022855: I tensorflow/core/platform/cpu_feature_guard.cc:182] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.

08:48:01:SoundClassifierTF: To enable the following instructions: SSE SSE2 SSE3 SSE4.1 SSE4.2 AVX2 FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.

08:48:01:SoundClassifierTF: C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\bin\windows\python39\venv\lib\site-packages\tensorflow\python\keras\engine\base_layer_v1.py:1697: UserWarning: `layer.apply` is deprecated and will be removed in a future version. Please use `layer.__call__` method instead.

08:48:01:SoundClassifierTF: warnings.warn('`layer.apply` is deprecated and '

08:48:01:SoundClassifierTF: C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\bin\windows\python39\venv\lib\site-packages\tensorflow\python\keras\legacy_tf_layers\core.py:318: UserWarning: `tf.layers.flatten` is deprecated and will be removed in a future version. Please use `tf.keras.layers.Flatten` instead.

08:48:01:SoundClassifierTF: warnings.warn('`tf.layers.flatten` is deprecated and '

08:48:01:SoundClassifierTF: 2024-05-15 08:48:01.364607: I tensorflow/compiler/mlir/mlir_graph_optimization_pass.cc:388] MLIR V1 optimization pass is not enabled

08:48:02:SoundClassifierTF: C:\Program Files\CodeProject\AI\modules\SoundClassifierTF\bin\windows\python39\venv\lib\site-packages\tensorflow\python\keras\engine\base_layer_v1.py:1697: UserWarning: `layer.apply` is deprecated and will be removed in a future version. Please use `layer.__call__` method instead.

08:48:02:SoundClassifierTF: warnings.warn('`layer.apply` is deprecated and '

|

|

|

|

|

The sound classifier is a TensorFlow 1 project ported (semi-ported!) to TensorFlow 2. Those warnings are just warnings and can be safely ignored. It needs a proper rewrite.

cheers

Chris Maunder

|

|

|

|

|

Hello all, every time I try to install any of the modules I get an Error in install: 404

I thought maybe it was an issue with my firewall, as I'm using UFW. I'm running Ubuntu Server 24.04.

I'm not sure if there are more ports I need to open other than the port that CodeProject.AI uses, 32168.

Server version: 2.6.4

System: Linux

Operating System: Linux (Ubuntu 24.04)

CPUs: 12th Gen Intel(R) Core(TM) i7-12700K (Intel)

1 CPU x 12 cores. 20 logical processors (x64)

GPU (Primary): NVIDIA GeForce RTX 2060 (6 GiB) (NVIDIA)

Driver: 535.161.08, CUDA: 12.2 (up to: 12.2), Compute: 7.5, cuDNN:

System RAM: 31 GiB

Platform: Linux

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.18

.NET SDK: 7.0.118

Default Python: 3.12.3

Go: Not found

NodeJS: Not found

Rust: Not found

Video adapter info:

TU104 [GeForce RTX 2060] (rev a1):

Driver Version

Video Processor

System GPU info:

GPU 3D Usage 10%

GPU RAM Usage 1.5 GiB

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

|

|

|

|

|

Try starting the server directly

sudo bash /usr/bin/codeproject.ai-server-2.6.4/start.sh

cheers

Chris Maunder

|

|

|

|

|

Tried starting CodeProject.AI from the terminal and I still get the same 404 errors.

|

|

|

|

|
