Introduction

Mouse gestures are a user-input technique in which the path traced by the mouse, rather than, for example, the press of a button, initiates an action.

In situations where there is limited space for UI controls or when UI controls would clutter and make the UI unpleasant to look at, Mouse Gestures can be used to optimize the screen usage.

This article describes how to implement a System.Windows.Forms.Panel that can capture and recognize mouse gestures drawn on it regardless of any child components.

Using the Code

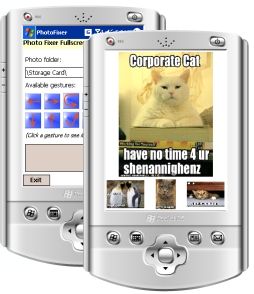

The example application for this article lets the user browse pictures and reorient them. Its intended purpose is to fix photos just taken with the camera on a Windows Mobile device. Mouse gestures are more useful on a PDA, where screen space is very limited. Because of this, the download includes two sets of source projects, one for the .NET Compact Framework and one for the .NET Framework.

They're marked Device and Desktop.

Requirements

This project set out to implement the following five requirements:

- A System.Windows.Forms.Panel should be the base control.

- All mouse events required should be handled automatically by the base control.

- The gesture path must be completely configurable.

- Mouse Gesture recognition must be fast enough to run on Windows Mobile devices.

- The base control must work regardless of any child controls.

Because this project was mainly targeted at the .NET Compact Framework, some of the requirements I set out to implement differ slightly from what would have been suitable for a desktop environment.

How to Recognize Gestures (Kind Of)

There are probably several ways to implement a component that recognizes mouse gestures, but for this project, in order to comply with requirement 4, I came up with a rather simple and straightforward approach.

Instead of doing some analysis of the path just drawn by the mouse, I decided to use a series of rectangles. The method can check whether a point on the mouse path is inside one of the rectangles. Simple!

But some added sophistication is required, because just checking against a set of rectangles would mean that the method is not able to distinguish between a left-to-right stroke and a right-to-left. By keeping the rectangles in an ordered list it is possible to check whether the mouse path intersects the rectangles in the correct order.
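
The ordering idea can be illustrated with a minimal sketch. It is not the article's implementation, which is shown later; the names OrderedRectangleSketch and VisitsInOrder are hypothetical, and the sketch assumes the rectangles and the path already share one coordinate space.

```csharp
using System;
using System.Collections.Generic;
using System.Drawing;

// Hypothetical helper, for illustration only.
public static class OrderedRectangleSketch
{
    // Returns the index of the first rectangle containing the point, or -1.
    private static int IndexOf(IList<Rectangle> rectangles, Point point)
    {
        for (int i = 0; i < rectangles.Count; ++i)
        {
            if (rectangles[i].Contains(point))
                return i;
        }
        return -1;
    }

    // True if the path enters every rectangle, in list order.
    public static bool VisitsInOrder(IList<Rectangle> rectangles, IList<Point> path)
    {
        int next = 0; // index of the rectangle the path must enter next
        foreach (Point point in path)
        {
            int index = IndexOf(rectangles, point);

            // Ignore points outside all rectangles or still inside the current one.
            if (index == -1 || index == next - 1)
                continue;

            // Entering any rectangle other than the expected one breaks the order.
            if (index != next)
                return false;

            next++;
        }
        return next == rectangles.Count;
    }
}
```

With one rectangle at the left edge and one at the right edge, a path that passes through the left rectangle and then the right one is accepted by this sketch, while the same path reversed is rejected because the first rectangle it hits is not the first in the list.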

At this point the observant reader probably goes: "Hey, wait! That's not proper mouse gestures!"

And that's absolutely right. Because my method checks for points inside rectangles, it does not actually recognize a gesture; it recognizes fixed paths. I wanted the implementation to be simple and fast, and on a PDA, with its small screen and a stylus for a mouse, the difference does not matter.

What this means is that for a gesture to be recognized it has to start and travel through specific areas on the screen (actually on the MouseGesture panel).

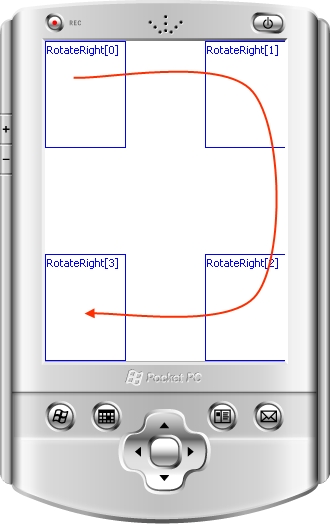

The above image shows a screen shot of a test application running a Bornander.UI.MouseGesturePanel in debug view, which is a mode where instead of rendering the control's child controls it renders the rectangles for each mouse gesture it is set up to recognize.

In this example, only one gesture has been added as recognizable, and the panel renders the rectangle, its name and (in brackets) the index of the rectangle which dictates the order in which it should appear in the gesture. (The red arrow is not rendered by the panel, that's been added afterwards to show the gesture these rectangles are meant to capture).

I'll describe the way to recognize a gesture in more detail later in this article.

Recording a Mouse Path

The first problem to solve is how to record the path the mouse (or indeed stylus) just drew. At first this problem might appear as simple as recording all the MouseMove events that occur between a MouseDown and a MouseUp event, but because of requirement 5 this is not possible. Mouse events occur on a per-control basis, and this causes a problem since a path might start over one control (generating the first MouseDown), move over several other controls (generating a series of MouseMove events) and then end when the mouse button is released (generating the final MouseUp event).

To add to this, each control reports mouse events in control-local coordinates. This means that the upper left corner of a control is always (0, 0), regardless of where the control resides in its parent control.

To solve this, I implemented a System.Windows.Forms.Panel called Bornander.UI.MouseGesturePanel that hooks mouse listeners recursively to all its child controls and their child controls. That way it can collect any mouse path drawn over it or any of its children.

A method, MouseGesturePanel.Initialize, should be called when all the controls have been added to the panel. This starts the recursion that adds all the mouse event listeners:

    public partial class MouseGesturePanel : Panel
    {
        public event MouseGestureRecognizedHandler MouseGestureRecognized;

        #region Private members

        private MouseEventHandler globalMouseDownEventHandler;
        private MouseEventHandler globalMouseUpEventHandler;
        private MouseEventHandler globalMouseMoveEventHandler;

        private List<Point> points = null;
        private List<Gesture> gestures = new List<Gesture>();

        private bool debugView = false;
        private List<Control> debugControlStore = new List<Control>();

        #endregion

        public MouseGesturePanel()
        {
            InitializeComponent();

            globalMouseDownEventHandler = new MouseEventHandler(GlobalMouseDownHandler);
            globalMouseUpEventHandler = new MouseEventHandler(GlobalMouseUpHandler);
            globalMouseMoveEventHandler = new MouseEventHandler(GlobalMouseMoveHandler);
        }

        private void AddHandlers(Control control)
        {
            control.MouseDown -= globalMouseDownEventHandler;
            control.MouseUp -= globalMouseUpEventHandler;
            control.MouseMove -= globalMouseMoveEventHandler;

            control.MouseDown += globalMouseDownEventHandler;
            control.MouseUp += globalMouseUpEventHandler;
            control.MouseMove += globalMouseMoveEventHandler;

            foreach (Control childControl in control.Controls)
            {
                AddHandlers(childControl);
            }
        }

        public void Initialize()
        {
            AddHandlers(this);
        }

        private void FireMouseGestureRecognized(Gesture gesture)
        {
            if (MouseGestureRecognized != null)
                MouseGestureRecognized(gesture);
        }

        ...
    }

Hooking the MouseGesturePanel to those events is obviously not enough; some form of processing has to be done when the events fire.

The first event that fires is MouseDown. The processing for this is simple: just prepare for handling the MouseMove events by creating an empty List<Point> to hold the points that will make up the mouse path:

    private void GlobalMouseDownHandler(object sender, MouseEventArgs e)
    {
        if (e.Button == MouseButtons.Left)
            points = new List<Point>();
    }

Next comes the series of MouseMove events that fire while the mouse is being dragged. As with the first event, processing these is quite simple: just store the position in the list prepared in MouseGesturePanel.GlobalMouseDownHandler.

A static utility method called Utilities.GetAbsolute translates the control-local coordinates passed in the MouseEventArgs e argument into MouseGesturePanel-local coordinates.

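    private void GlobalMouseMoveHandler(object sender, MouseEventArgs e)
    {
        // points is still null if the drag started outside the hooked controls,
        // so guard against that before recording the position.
        if (e.Button == MouseButtons.Left && points != null)
        {
            Point absolutePoint =
                Utilities.GetAbsolute(new Point(e.X, e.Y), (Control)sender, this);
            points.Add(absolutePoint);
        }
    }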

The Utilities.GetAbsolute method iterates from the source control up through the control tree until it reaches the control passed as the last argument, offsetting the point by the position of each control it passes on the way:

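    public static Point GetAbsolute(Point point, Control sourceControl,
        Control rootControl)
    {
        Point tempPoint = new Point();

        // Walk up the control tree, accumulating each control's offset within its parent.
        // The null check ends the walk if sourceControl is not a descendant of rootControl.
        for (Control iterator = sourceControl;
            iterator != null && iterator != rootControl;
            iterator = iterator.Parent)
        {
            tempPoint.Offset(iterator.Left, iterator.Top);
        }

        tempPoint.Offset(point.X, point.Y);
        return tempPoint;
    }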
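
To make the translation concrete, here is a small sketch of the same walk up the tree that does not depend on Windows Forms. The Node class is a hypothetical stand-in for a control (only its parent and its position within that parent are modelled), and ToRootCoordinates is an illustrative name, not part of the article's code.

```csharp
using System;
using System.Drawing;

// Hypothetical stand-in for a control: a parent and a position inside that parent.
public sealed class Node
{
    public Node Parent { get; }
    public int Left { get; }
    public int Top { get; }

    public Node(Node parent, int left, int top)
    {
        Parent = parent;
        Left = left;
        Top = top;
    }
}

public static class CoordinateSketch
{
    // Translates a point local to 'source' into coordinates local to 'root'
    // by adding the offset of every node between 'source' and 'root'.
    public static Point ToRootCoordinates(Point local, Node source, Node root)
    {
        Point result = local;
        for (Node node = source; node != null && node != root; node = node.Parent)
        {
            result.Offset(node.Left, node.Top);
        }
        return result;
    }
}
```

For example, if a button sits at (5, 10) inside a group that sits at (20, 30) inside the root, the button-local point (3, 4) becomes (3 + 5 + 20, 4 + 10 + 30) = (28, 44) in root coordinates. The root's own position is never added, because the result is relative to the root.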

The last event to fire, MouseUp, requires a bit more processing, because it is not until this point that the MouseGesturePanel actually tries to recognize a gesture. The MouseGesturePanel iterates through all its Gestures and passes the current mouse path to each of them to see if there is a match. If a match is found, an event is fired to notify listeners that a gesture has been recognized. Only the first matching gesture is reported.

    private void GlobalMouseUpHandler(object sender, MouseEventArgs e)
    {
        // points is null if no MouseDown was seen, in which case there is no path to test.
        if (e.Button == MouseButtons.Left && points != null)
        {
            foreach (Gesture gesture in gestures)
            {
                if (gesture.IsGesture(points))
                {
                    switch (gesture.EventFireLevel)
                    {
                        case GestureRecognizeEventFireLevel.Panel:
                            FireMouseGestureRecognized(gesture);
                            break;
                        case GestureRecognizeEventFireLevel.Gesture:
                            gesture.FireMouseGestureRecognized();
                            break;
                    }
                    return;
                }
            }
        }
    }

The reason for the switch statement in the middle is that I wanted to be able to listen for gesture recognized events on two levels:

- Panel level

- Gesture level

This means that there are two different places and two different ways to hook and listen to these events.

Panel Level

This is the level where it's convenient to hook a gesture-recognized listener if the processing that should be done for a gesture is simple, where simple means a few lines of code. It is intended for a set of gestures that are all very simple to process. The same event is fired for different gestures, so the gestures have to be differentiated in the handler method.

Gesture Level

At this level the Gesture itself fires the event, which means that the event is always fired by the same Gesture and the handler does not need to differentiate.

Example

This is an example using gestures at both levels:

    class TestClass
    {
        private Gesture simpleGestureA;
        private Gesture simpleGestureB;
        private Gesture simpleGestureC;
        private Gesture complexGesture;

        public TestClass()
        {
            MouseGesturePanel mouseGesturePanel = new MouseGesturePanel();

            // Simple gestures share the panel-level event.
            simpleGestureA = new Gesture("A", mouseGesturePanel,
                GestureRecognizeEventFireLevel.Panel);
            simpleGestureB = new Gesture("B", mouseGesturePanel,
                GestureRecognizeEventFireLevel.Panel);
            simpleGestureC = new Gesture("C", mouseGesturePanel,
                GestureRecognizeEventFireLevel.Panel);

            // The complex gesture fires its own event.
            complexGesture = new Gesture("Complex", mouseGesturePanel,
                GestureRecognizeEventFireLevel.Gesture);

            mouseGesturePanel.MouseGestureRecognized +=
                new MouseGestureRecognizedHandler(HandleSimpleGestures);
            complexGesture.MouseGestureRecognized +=
                new MouseGestureRecognizedHandler(HandleComplexGesture);
        }

        // FooBar and Foo are placeholders for application logic.
        void HandleComplexGesture(Gesture gesture)
        {
            if (FooBar.Bar() && Foo.Bar != 0)
            {
                switch (FooBar.Value)
                {
                    case FooBar.Foo:
                        FooBar.Foo();
                        break;
                    case FooBar.Bar:
                        FooBar.Bar();
                        break;
                }
            }
        }

        void HandleSimpleGestures(Gesture gesture)
        {
            if (gesture == simpleGestureA)
                FooBar.Foo();
            if (gesture == simpleGestureB)
                FooBar.Bar();
            if (gesture == simpleGestureC)
                Foo.Bar();
        }
    }

Defining a Gesture

A gesture is made up of a name (mostly used for debugging purposes) and a series of rectangles that make up the mouse path. In order to allow for resizing of the MouseGesturePanel, the rectangles are not specified in absolute coordinates but in relative coordinates, and these are translated into absolute coordinates when the MouseGesturePanel resizes.

This means that when creating a Gesture and adding rectangles to it, they are added as System.Drawing.RectangleF and all the values (Left, Top, Width, Height) should fall within the range 0.0 <= value <= 1.0.

So in order to create a rectangle that is located at the lower left corner of the panel and covers 1/16th of its area, the following code should be used:

    Gesture gesture = new Gesture("Test", mouseGesturePanel,
        GestureRecognizeEventFireLevel.Gesture);

    gesture.AddRectangle(new RectangleF(0.0f, 0.75f, 0.25f, 0.25f));
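
The translation from relative to absolute coordinates could look roughly like the sketch below. This is an assumption about how such a conversion might be written, not the code from the download; RelativeRectangleSketch and ToAbsolute are hypothetical names, and rounding to the nearest pixel is a choice made here for illustration.

```csharp
using System;
using System.Drawing;

// Hypothetical helper, for illustration only.
public static class RelativeRectangleSketch
{
    // Scales a rectangle given as fractions of the panel (0.0 to 1.0)
    // to pixel coordinates for a panel of the given size.
    public static Rectangle ToAbsolute(RectangleF relative, Size panelSize)
    {
        return new Rectangle(
            (int)Math.Round(relative.X * panelSize.Width),
            (int)Math.Round(relative.Y * panelSize.Height),
            (int)Math.Round(relative.Width * panelSize.Width),
            (int)Math.Round(relative.Height * panelSize.Height));
    }
}
```

For a 240 x 320 panel, the relative rectangle (0.0, 0.75, 0.25, 0.25) from the example above maps to a 60 x 80 pixel rectangle at (0, 240). Calling the same conversion again with the new size after a resize keeps the rectangle in the lower left corner.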

The Gesture itself automatically adds a listener to its MouseGesturePanel resize event and recalculates the absolute rectangles whenever that panel changes size. Actually recognizing a gesture then becomes as easy as iterating over all the points and checking whether they fall within the rectangles in correct order:

    public class Gesture
    {
        // Returns the index of the first rectangle containing the point, or -1 if none does.
        private int GetRectangleIndexForPoint(Point point)
        {
            for (int i = 0; i < absoluteRectangles.Count; ++i)
            {
                if (absoluteRectangles[i].Contains(point))
                    return i;
            }
            return -1;
        }

        public bool IsGesture(List<Point> mousePath)
        {
            if (mousePath.Count > 0)
            {
                // The path must start inside the first rectangle.
                if (GetRectangleIndexForPoint(mousePath[0]) == 0)
                {
                    int currentRectangle = 0;
                    foreach (Point point in mousePath)
                    {
                        int pointRectangleIndex = GetRectangleIndexForPoint(point);

                        // Points outside all rectangles are ignored.
                        if (pointRectangleIndex != -1)
                        {
                            if ((pointRectangleIndex == currentRectangle) ||
                                (pointRectangleIndex == currentRectangle + 1))
                            {
                                // Advance when the path enters the next rectangle.
                                if (pointRectangleIndex == currentRectangle + 1)
                                    currentRectangle = pointRectangleIndex;
                            }
                            else
                                return false; // rectangle visited out of order
                        }
                    }

                    // The path must have reached the last rectangle.
                    return currentRectangle == absoluteRectangles.Count - 1;
                }
            }
            return false;
        }
    }

And that's it: working mouse gestures. At least on PDAs.

Example Application

The example application I created to test this implementation is, as mentioned earlier, an application for fixing the orientation (landscape or portrait) of photos. It allows the user to browse all *.jpg files in a directory and rotate each picture. If happy with the result, the user can save the picture in its new orientation. Simple, not very useful, but a good testbed for this project since it's the type of application one would not want cluttered with buttons (I think).

The commands, or indeed gestures, available to the user are:

| Gesture | Description |
|---|---|
| | Exit |
| | Next |
| | Previous |
| | Rotate left |
| | Rotate right |
| | Show preview |
| | Hide preview |
| | Save |

Final Result

So how did the implementation turn out?

I think I managed to implement all the requirements fairly well. The test application works really well, and you quickly become used to the new way of navigating.

What I'm less happy about is that the method recognizes fixed paths rather than actual gestures, which limits its use in a desktop environment.

Future Improvements

I've got some ideas on how to build a dynamic area based on the currently drawn mouse gesture (rather than the MouseGesturePanel client area) that would in theory allow the method to detect actual gestures. If I can find the time, I'll try to implement that and update this article with it.

Points of Interest

The images in the example application are rotated using a fast (for the .NET Compact Framework at least) method that I've already described here.

All comments and suggestions are welcome.

History

- 2007-12-07: First version
