Introduction

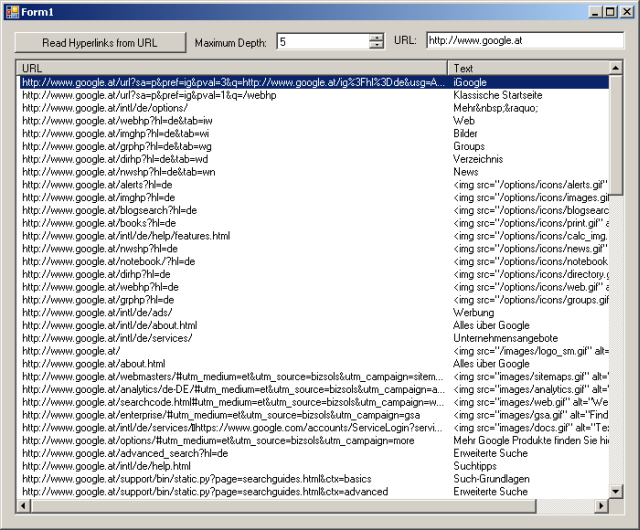

This code parses hyperlinks from an HTML document and follows them recursively, like a Web spider. The sample is embedded into a Windows form containing a list view which shows the parsed hyperlinks.

It doesn't really parse only hyperlinks ("<a href..."). Iframes, Frames and Image Maps are also supported.

This is NOT an introduction to regular expressions!

Background

I needed this code to create a "Google Sitemap" for my own private homepage.

Using the Code

The code supports a maximum depth parameter so that you will not follow too many hyperlinks. It also follows only URLs which are "below" the URL you have specified for the start.

Here's the nearly complete code of this sample, including a lot of comments explaining what it does.

protected Regex m_rxBaseHref;

protected Regex m_rxHref;

protected Regex m_rxFrame;

protected Regex m_rxIframe;

protected Regex m_rxArea;

protected List<string> m_strListUrlsAdded;

protected List<string> m_strListUrlsFollowed;

public Form1()

{

InitializeComponent();

RegexOptions rxOpt = RegexOptions.Singleline |

RegexOptions.Compiled |

RegexOptions.IgnoreCase;

m_rxHref = new Regex("<a[^>]*href=(\"|')(.*?)\\1[^>]*>(.*?)</a>", rxOpt);

m_rxFrame = new Regex("<frame[^>]*src=(\"|')(.*?)\\1[^>]*>", rxOpt);

m_rxIframe = new Regex("<iframe[^>]*src=(\"|')(.*?)\\1[^>]*>", rxOpt);

m_rxArea = new Regex("<area[^>]*href=(\"|')(.*?)\\1[^>]*>", rxOpt);

m_rxBaseHref = new Regex("<base[^>]* href=(\"|')(.*?)\\1[^>]*>", rxOpt);

}

private void btnReadFromUrl_Click(object sender, EventArgs e)

{

Cursor = Cursors.WaitCursor;

lvUrls.Items.Clear();

m_strListUrlsAdded = new List<string>();

m_strListUrlsFollowed = new List<string>();

ReadUrls(tbUrl.Text, tbUrl.Text, ref m_strListUrlsAdded,

ref m_strListUrlsFollowed, (int)numMaxDepth.Value, 0);

Cursor = Cursors.Default;

}

protected void ReadUrls(string strURL, string strStartBase,

ref List<string> strUrlsAdded,

ref List<string> strUrlsFollowed,

int iMaximumDepth,

int iCurrentDepth)

{

if (++iCurrentDepth == iMaximumDepth)

{

return;

}

HttpWebRequest req = null;

try

{

req = HttpWebRequest.Create(strURL) as HttpWebRequest;

}

catch (Exception) { }

if(req == null)

{

return;

}

req.Method = "GET";

HttpWebResponse res = null;

try

{

res = req.GetResponse() as HttpWebResponse;

}

catch (Exception){}

if(res == null || res.StatusCode != HttpStatusCode.OK)

{

return;

}

Stream s = res.GetResponseStream();

StreamReader sr = new StreamReader(s);

string strHTML = sr.ReadToEnd();

sr.Close();

sr.Dispose();

sr = null;

s.Close();

s.Dispose();

s = null;

int iPos, iPos2;

string strBase = req.Address.AbsoluteUri;

iPos = strBase.IndexOf('?');

if(iPos > -1)

{

strBase = strBase.Substring(0, iPos);

}

if(strBase[strBase.Length - 1] != '/')

{

iPos = strBase.LastIndexOf('/');

if(iPos < 0)

{

return;

}

strBase = strBase.Substring(0, iPos + 1);

}

iPos = strBase.IndexOf("://");

if(iPos < 0)

{

return;

}

iPos = strBase.IndexOf('/', iPos + 3);

if(iPos < 0)

{

return;

}

string strBaseHostUrl = strBase.Substring(0, iPos + 1);

Match matchBaseHref = m_rxBaseHref.Match(strHTML);

if (matchBaseHref.Success)

{

string strHtmlBase = matchBaseHref.Groups[2].Value.Trim();

if(strHtmlBase.StartsWith("/"))

{

strBase = strBaseHostUrl + strHtmlBase.Substring(1);

}

else

{

strBase = strHtmlBase;

}

}

Dictionary<string, string> dictHrefs = new Dictionary<string, string>();

MatchCollection matchesHref = m_rxHref.Matches(strHTML);

AddHrefMatches(matchesHref, ref dictHrefs);

MatchCollection matchesFrame = m_rxFrame.Matches(strHTML);

AddHrefMatches(matchesFrame, ref dictHrefs);

MatchCollection matchesIframe = m_rxIframe.Matches(strHTML);

AddHrefMatches(matchesIframe, ref dictHrefs);

MatchCollection matchesArea = m_rxArea.Matches(strHTML);

AddHrefMatches(matchesArea, ref dictHrefs);

foreach (string strUrlFound in dictHrefs.Keys)

{

string strUrlNew = strUrlFound;

if (IsAbsoluteUrl(strUrlNew) && !IsHttpUrl(strUrlNew))

{

continue;

}

if (!IsHttpUrl(strUrlNew))

{

if (strUrlNew.StartsWith("/"))

{

strUrlNew = strBaseHostUrl + strUrlNew.Substring(1);

}

else

{

strUrlNew = strBase + strUrlNew;

}

}

while ((iPos = strUrlNew.IndexOf("../")) > -1)

{

iPos2 = strUrlNew.Substring(0, iPos).LastIndexOf('/');

iPos2 = strUrlNew.Substring(0, iPos2).LastIndexOf('/');

strUrlNew = strUrlNew.Substring(0, iPos2) +

"/" + strUrlNew.Substring(iPos + 3);

}

if (!strUrlNew.StartsWith(strStartBase))

{

continue;

}

if (!strUrlsAdded.Contains(strUrlNew))

{

ListViewItem lvi = new ListViewItem(new string[]{

strUrlNew,

dictHrefs[strUrlFound]

});

lvUrls.Items.Add(lvi);

strUrlsAdded.Add(strUrlNew);

}

if (!strUrlsFollowed.Contains(strUrlNew))

{

strUrlsFollowed.Add(strUrlNew);

ReadUrls(strUrlNew, strStartBase,

ref strUrlsAdded, ref strUrlsFollowed,

iMaximumDepth, iCurrentDepth);

}

}

}

Points of Interest

It was a little bit tricky trying to discover how to handle parent links ("../"), base hrefs, Content-Locations and so on. But now I know a little bit more about this.

History

- 06.07.2007

- Added debug output in a textbox to solve problems

- The reading of the URLs is now done in a separate thread

- 03.06.2007

This member has not yet provided a Biography. Assume it's interesting and varied, and probably something to do with programming.

General

General  News

News  Suggestion

Suggestion  Question

Question  Bug

Bug  Answer

Answer  Joke

Joke  Praise

Praise  Rant

Rant  Admin

Admin