Introduction

This article describes the challenges I faced while programming a simple, concurrent WebSockets server in C++, and concludes with a complete working solution.

WebSockets is an emerging specification currently being standardized along with HTML5, which means that in the near future, more developers will be programming with the WebSockets API. Additionally, concurrent programming is a large topic in programming and tends to be discussed with the client-server model and network programming. To efficiently use computational resources, it's important to understand concurrent programming.

I thought it would be a good programming exercise to create a WebSockets program as an example that could reliably reproduce race conditions. Then afterwards, I'd apply sequence coordination using thread primitives such as Mutex or Semaphore to prevent the race condition. Reading about how race conditions might arise is one thing, but creating situations for race conditions that are reproducible and identifiable is another matter. I found the latter to be more difficult. A web HTTP or WebSockets server is a useful application of multithreading because the call to the Accept method of the sockets (Winsock) interface blocks the thread which it is called on, and moreover, we would want a server to service multiple clients concurrently rather than iteratively (only 1-at-a-time).

Race Conditions

At first, I created textbook scenarios of race conditions, such as incrementing a global integer N times. When it came to actually running a program of M concurrent threads, however, the program would fail to produce a race condition; I would continue to get the same, correct answer on every run (until the N is equal to at least 106). How do you know if a race condition occurred or didn't? You can test this by determining what the correct, expected value of the shared variable should be and then determining whether this property holds when the program runs. This would be like an Assert.AreEqual(expected, actual) if you were unit testing. If we applied this to the textbook example, the expected value would be the integer incremented by N * M.

The variable has to be memory shared across multiple threads. By definition, a variable is shared if and only if multiple threads reference the same instance of that variable (Bryant & O' Hallaron). We can make a variable shared in C++ by declaring it at the file (global) scope. It isn't so easy to always show race conditions with smaller types such as int, but it gets easier when the variable takes up a lot of memory, such as a char* of 512 bytes. Clearly, the larger the memory area in a write operation, the greater the time spent writing, and the greater the chance of overlap between two threads.

To create the desired scenarios, I wanted to create a project with the following attributes:

- In C/C++. I do most of my programming in C#, but I wanted to use C/C++ here to minimize any unexpected consequences of abstractions provided by the .NET Framework. No MFC or CLI is to be used, either.

- The network programming uses Winsock.

- Multithreading uses the CRT, i.e.,

_beginthreadex. - The variable used to create the race condition would be a shared

char* buffer of 512 bytes. Seemed fitting as the buffer idiom is common in network programming. - The server would call

Accept on the main thread and service WebSockets clients on a new thread created for each client that connected. - The thread servicing the client will echo the message back to the client, if no race condition occurred, then the client will receive the exact message it sent. If a race condition did occur, the client will receive a message not equal to the one sent.

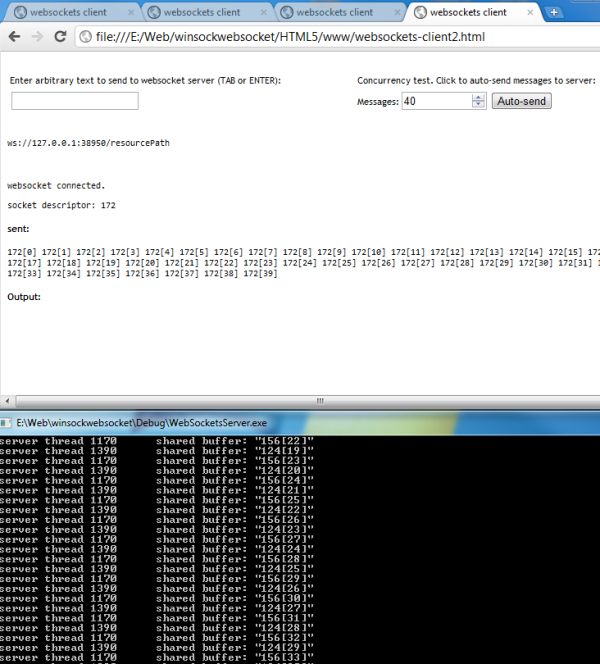

- The clients will run from browser instances of Chrome or Firefox running JavaScript using the native WebSockets API draft 03. When the actual message is not equal to the expected, the client writes this to the output.

- The user can input the message to send, or the JavaScript client can auto-send some number of requests on an interval.

- Each client's request (after the handshake) would write its message to the shared

char* buffer. That is, when the thread which is servicing the client request calls recv, it writes the received message into the shared buffer.

Upon creating the program with the guidelines listed above, I was able to consistently get a race condition on an AMD Phenom II 920 quad-core with only 3 clients sending 12 messages each.

The next task was to prevent the race: synchronize threads and control access to the shared memory by using thread primitives. We might select to use the semaphore which allows some user-specified number of threads to run in a critical region concurrently. In this case, we only want one thread to have access to the shared buffer at any one time, so the semaphore would have to be a binary semaphore, having a maximum value of 1; a value of either 0 or 1. Since we are using a binary semaphore for exclusive access, we call this a Mutex, so we may as well use a Mutex. On the thread which services each client and writes to the shared buffer, we can control access with a Mutex as follows:

do {

acquire_mutex();

received = server_recv(shared_buffer, connectfd, isWebSocket);

printf("server thread %x\tshared buffer: \"%s\"\n", GetCurrentThreadId(), shared_buffer);

server_send(shared_buffer, connectfd, isWebSocket);

release_mutex();

} while (received > -1);

The acquire_mutex, release_mutex functions encapsulate the actual calls to the CRT:

void acquire_mutex() {

if ( WaitForSingleObject(ghMutex, INFINITE) != WAIT_OBJECT_0) {

printf("Error on WaitForSingleObject (thread %x)\n", GetCurrentThreadId());

}

}

void release_mutex() {

if (!isMutexEnabled)

return;

if (!ReleaseMutex(ghMutex)) {

printf("Error releasing Mutex on thread %x.\n", GetCurrentThreadId());

}

}

Deadlocks

Once I introduced the Mutex, I had created a deadlock situation. This is because the call to recv fell within the critical region surrounded by the calls to acquire_mutex and release_mutex. This becomes a deadlock problem if for example, a connection to a client remains open, the client stops sending messages, and then the server thread which last called entered the critical region and acquired the Mutex, called recv on that client's socket. The server thread is blocking on the call to recv, waiting for the client to send a message, while the other threads are waiting for the thread to release the Mutex. This can be solved by moving the call to recv outside the critical region. So we use the do-while loop from earlier as an example:

do {

memset(local_buffer, '\0', BUFSIZ);

received = server_recv(local_buffer, connectfd, isWebSocket);

acquire_mutex();

memcpy(shared_buffer, local_buffer, BUFSIZ);

printf("server thread %x\tshared buffer: \"%s\"\n",

GetCurrentThreadId(), shared_buffer);

release_mutex();

server_send(local_buffer, connectfd, isWebSocket);

} while (received > -1);

Instead of receiving directly into the shared buffer, we refactor the code a bit, taking one extra step of indirection to receive the data into a local buffer. Then we copy the data from the local buffer, and write it to the shared buffer in one call to memcpy. This call is located in the critical region that's surrounded by the calls to acquire_mutex, release_mutex. A general lesson I learned there was, to avoid deadlocks, only make a call to acquire or release on the smallest region of code possible, and furthermore, don't include any blocking calls within the critical region, if possible.

Running the the demo project:

The WebSockets JavaScript client is run in a browser and sends the server a message input by the user, or auto-generates a user-specified number of messages to send. For each message sent, the JavaScript client will assert that the message echoed back from the serer is equal to the one sent. The program is contained within the following files:

- websockets-server-race-conditions+deadlock.cpp: Creates a deadlock if a client browser instance opens a socket then stops sending messages. This is because the server thread servicing that WebSocket is blocking on the call to

recv and has acquired the mutex, blocking all other server threads. - websockets-server-race-conditions.cpp: The mutex can be disabled, and if it is, the WebSockets client receives a message not equal to the one sent.

- websockets-client.html: The HTML page that contains the WebSockets client (in JavaScript) to run in a browser.

Instructions to run the demo:

- Run the WebSockets server C++ program in Visual Studio 2010. Select one of the two .cpp programs/scenarios to run (described above).

- Next, view the client HTML page, websockets-client.html, in multiple instances of Chrome or Firefox 4-5.

You can also find the latest version of the project at http://winsockwebsocket.codeplex.com/. Future plans are to work on the memory deallocation.

Overall I found this to be a good learning exercise in network programming and multithreading concepts, and thought that this project may serve as a useful learning tool to others.

This member has not yet provided a Biography. Assume it's interesting and varied, and probably something to do with programming.

General

General  News

News  Suggestion

Suggestion  Question

Question  Bug

Bug  Answer

Answer  Joke

Joke  Praise

Praise  Rant

Rant  Admin

Admin