Introduction

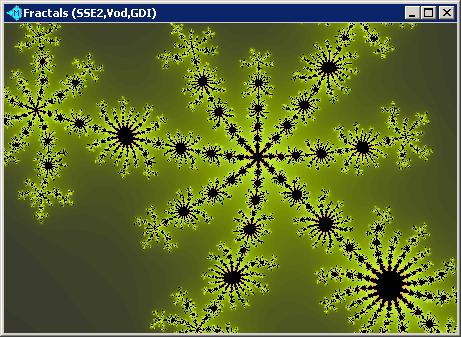

You probably have heard about fractals before. They are beautiful pictures such as the one shown above. Any fractal can be described using iterative formulas. So you can generate a fractal by evaluating these formulas and finding the color of each pixel. That is a large computational task, and drawing a fractal needs a fast CPU and a carefully optimized program.

Fractals and their properties are studied in non-linear dynamics. Besides the scientists, there are people who like to explore fractals simply because the pictures are graceful and quaint.

I will skip the mathematical background in this article. You can find necessary explanations here:

The last three articles also provide some C code for fractal generation. If you are new to fractals, I recommend you read the articles by Fabio Cesari. His explanations are simple and clear, with tables and images that are worth a thousand words.

My Goals and Review of Several Fractal Generators

I had to find or develop educational software for learning fractals and their properties. The software will be used to show students what Mandelbrot and Julia sets are, and will give them some exercises. The students are expected to work with the program only for one lesson, thus it needs to be simple and intuitive. The main features are the following:

- generating Julia and Mandelbrot sets using a fast SSE-driven algorithm;

- viewing the orbit of the function (i.e., the sequence of iterations) as a graph and as a table;

- showing axes and coordinates of any point;

- easy zooming (left click to zoom in, right click to zoom out or something similar);

- generating the Julia set with the C parameter that corresponds to the point of the Mandelbrot set.

The last point means you can pick the C value for your Julia set from the image of the Mandelbrot set. You simply hold the mouse button, move the cursor over the Mandelbrot set, and the Julia set changes as you do it:

I will call this PnP mode (Picture iN Picture) for short.

And a few words about non-goals, namely the things that I am not going to implement:

- fancy colors and palettes (if I did this, the students would get focused on coloring schemes instead of math);

- any types of fractals other than Julia and Mandelbrot;

- support for 3DNow (SIMD extensions found in old AMD processors; modern Athlon XP uses SSE);

- porting to other OSes and processors (x86 and Win9x/XP were just enough for me);

- multiprocessor support.

My first try was Chaos Pro. It's a complicated freeware application that can generate Julia and Mandelbrot sets and a lot of other fractals. You can even add your own fractal formulas using the built-in programming language. While the program is able to create cool 3D images and animations, use different palettes, etc., it still lacks some essential features. First, you cannot view the orbit and axes. Second, the Mandelbrot set could be generated in about 1.6 seconds on my Pentium M 1.5 GHz laptop, and the Julia set with C = -0.12+0.74i takes 1.4 seconds to draw. Chaos Pro supports PnP mode, but it's inconvenient to use because the rendering is so slow. Fractals cannot be fully rendered in real time (you can't get the picture changing instantly as you move the cursor). Third (and it was the main reason for me), I often got "Access violation" error or other bugs with Chaos Pro. Certainly, it's okay for the freeware application written by a hobbyist, but I wanted something more reliable.

Then I came across FracInt, an good old DOS program for drawing fractals. It has been developed several years ago, and a 20.0 version of this application is available now. The program is quite sophisticated and powerful, with a lot of intriguing features such as ability to view several dozens of fractal types, play fractal music, use the PnP mode, and create stereoscopic images. Its main disadvantage is the outdated user interface. Zooming takes a lot of time: you drag the mouse to set the desired rectangle size, then move it to the point and, finally, you click twice (left button to zoom in, right button to zoom out). This was unacceptable for me, because an average student will spend half of the lesson comprehending how to use this zooming interface. Video modes up to 1600x1200 are available (1024x768 VESA should work with most of the video cards), but when I ran FracInt in Windows XP and pressed Alt+Tab, my video driver crashed. The output to the sound card didn't work, and I had to use my PC speaker (does anybody still remember this infamous squealer? ;). Needless to say about missing MMX or SSE support in this old application.

After several hours of web-surfing, I discovered Fast Floating Fractal Fun a.k.a. FFFF. It's a very fast Mandelbrot set generator supporting SSE, SSE2, 3DNow and GPU Vertex Programs. If I had known about it before, I would not have started developing my own fractal rendering engine :). Though, FFFF has not much features besides zooming and switching between different calculating engines, so if you decide to use it, you will need to add a Julia set generator and develop a more elaborate GUI.

The Algorithm

Here is the algorithm for generating the Mandelbrot set in pseudocode:

complex P, Z;

for each point P on the complex plane {

Z = 0;

for i = 0 to ITER {

if abs(Z) > 4.0

set point color to palette[i];

break the inner loop and go to the next point;

Z = Z * Z + P;

}

set point color to black;

}

P and Z are complex variables. You can read general explanations about complex numbers in the articles mentioned above.

For every pixel P of the image, the loop is repeated ITER times. So it takes O(X*Y*ITER) time to execute, where ITER is the number of iterations, and the image has a dimension of X x Y pixels.

abs(Z) is a function that calculates the absolute value of a complex number. Imagine a circle with radius 2.0 and the center in the origin. If the point Z is outside of this circle, abs(Z) will be more than 4.0. In this case, the inner loop should be terminated, because you know that every point of a Mandelbrot set lies inside the circle. And if Z has gone outside the circle, the point P doesn't belong to the Mandelbrot set. Let's mark this point with a color that shows how fast it escapes the circle when you iterate the Z = Z * Z + P calculation. The number of complete iterations, i, shows this. Thus you take the i-th color from some palette and continue from the next point. The palette is simply an array of colors. For example, the points that go outside the circle after the first iteration are marked with dark green color; the points that leave the circle after the second iteration are marked with light green and so on.

Otherwise, if the loop has been executed for ITER times and the point Z has never gone outside the circle, then the point belongs to the Mandelbrot set. Let's paint it with black color.

The inner loop repeatedly evaluates Z<SUB>N+1</SUB> = Z<SUB>N</SUB><sup>2</sup> + P, the iterative formula for the Mandelbrot set. Z is initialized to zero, but it's easy to see that you can set Z = P, because Z<SUB>1</SUB> = Z<SUB>0</SUB><sup>2</sup> + P = 0<sup>2</sup> + P = P.

Using a palette is the easiest and the fastest way to color in the Mandelbrot set, but the resulting image will have only ITER+1 colors. There are methods that can render 24-bit images. For example, ChaosPro uses this formula:

color_index = (i - log(log(abs(Z))) * 1.442695 + 2) / 252.0;

Coefficients 252.0 and 2.0 scale down the color_index value to fit into a 0..1 range. Then, the color_index is translated into a 24-bit color. Unfortunately, calculating the logarithm is a slow operation that it will add about 30-50% to the overall execution time. That's why I sacrificed the 24-bit color for the sake of speed and chose the limited palette.

To translate the algorithm into C code, you have to remember the formulas for multiplying complex numbers and finding their absolute values (I hope you didn't miss math lessons at college :).

void PaintMandelbrotFPU(int w, int h, long* b, double left, double top,

double right, double bottom) {

int x, y, i; double x2, y2, px, py, zx, zy, dx, dy;

dx = (right - left) / w;

dy = (bottom - top) / h;

py = top;

for(y = 0; y < h; ++y) {

px = left;

for(x = 0; x < w; ++x) {

zx = px, zy = py;

for(i = 0; i < ITER; ++i) {

x2 = zx * zx, y2 = zy * zy;

if(x2 + y2 > 4.0)

break;

zy = zx * zy * 2 + py;

zx = x2 - y2 + px;

}

*b++ = a[i];

px += dx;

}

py += dy;

}

}

The complex variable p has turned to px (the real part) and py (the imaginary part). The same happens for zx and zy. Squares of zx and zy are used twice: in the absolute value calculation and in finding z * z + p. That's why I made two variables x2 and y2 for them. Variables zx and zy are initialized to px and py instead of zeros, because then you will save time by skipping the 0*0 multiplication.

Note the integer variables x and y that counts the current pixel coordinates, and the pair (px, py) which are coordinates on the complex plane. px and py are increased independently of x and y. This improves performance dramatically, because you don't need to translate screen coordinates (x, y) to complex plane coordinates (px, py) each time the loop is iterated. The price for such a performance improvement is the loss of precision. Floating point numbers have limited precision, and errors are accumulated during multiple additions: px += dx, py += dy. This causes an unpleasant effect: when you zoom in the picture, it will "jerk". An alternate solution could be calculating px = (right - left) * x / w, py = (bottom - top) * y / h at each iteration, but this seems to be impractical, because the division has a long latency.

Finally, a is my palette, and b is a bitmap being generated by the function. The bitmap b is later blitted to the screen with DirectDraw or GDI functions. Details of this process are unimportant for us now. Just remember that the size of b is w * h pixels, and you need to fill it with colors from the palette.

When the inner loop is terminated using a break, i has a value below ITER. And when it's not terminated, i == ITER after the loop. With this property, I did a nasty trick by creating the array a[ITER+1] and making the last element of this array equal to the color black. After the loop, a[i] refers to black in a[ITER]. A more strict way to do this would be:

for(i = 0; i < ITER; ++i) {

x2 = zx * zx, y2 = zy * zy;

if(x2 + y2 > 4.0) {

*b++ = a[i];

px += dx;

goto NEXTPIXEL;

}

zy = zx * zy * 2 + py;

zx = x2 - y2 + px;

}

*b++ = RGB(0,0,0);

px += dx;

NEXTPIXEL:

The algorithm for generating a Julia set is very similar to the one for the Mandelbrot set, so I will not show it here. The main difference is the introduction of the complex parameter (PX, PY) in Julia.

MOV-ADD method

I did a little profiling and found that the PaintMandelbrotFPU function takes about 95%-99% of the overall execution time. Painting takes only 5% with GDI or 1% when using DirectDraw. Thus it's much more important to optimize this function than any other part of the program.

One of the best ways to do such optimizations is using SSE (Streaming SIMD Extensions). These instructions allow you to calculate four pixel values at the same time. So, at least in theory, the speed will be improved by a factor of 4 (the real figures are about 3.2-3.3). You can find more information about SSE in the Intel Architecture Software Developer's Manual, volume 1, chapter 10, and the AMD64 Architecture Programmer's Manual, vol. 1, chapter 4. In further explanations, I will assume you know the basics of SSE and x86 assembler programming.

There are a lot of different ways to arrange SSE instructions for this function, and some of these ways are faster than others. Here is a very fast sequence for the Pentium IV processor. I call it the MOV-ADD method:

MOVAPS xmm0,xmm4

XORPS xmm6,xmm6

MOVAPS xmm1,xmm5

mov ecx, ITER

ILOOP:

MOVAPS xmm2, xmm0

MULPS xmm0, xmm0

MOVAPS xmm3, xmm1

ADDPS xmm1, xmm1

MULPS xmm1, xmm2

MOVAPS xmm2, xmm0

MULPS xmm3, xmm3

ADDPS xmm1, xmm5

SUBPS xmm0, xmm3

ADDPS xmm2, xmm3

CMPLEPS xmm2, xmm7

ADDPS xmm0, xmm4

MOVMSKPS eax, xmm2

test eax, eax

jz EXIT

ANDPS xmm2, xmm7

ADDPS xmm6, xmm2

sub ecx, 1

jnz ILOOP

EXIT:

Only the inner loop is shown here. By the start of the loop, xmm0 and xmm1 contain px and py, the initial values for zx and zy. Registers xmm4 and xmm5 store px and py (both of them are loop invariants). The register xmm7 contains the constant 4.0 in all doublewords.

The program starts by saving xmm0 and xmm1 in temporary registers xmm2 and xmm3. Then zx is squared, and zy is doubled by adding xmm1 to itself. Other steps of calculation are shown in the comments above.

The register xmm6 holds the number of complete iterations. Note that the number in xmm6 should be counted independently for each of the 4 pixels. The CMPLEPS instruction puts a bit mask into the register xmm2, and the sequence MovMskPS-test-jz checks whether all 4 pixels have gone outside of the circle with a radius 2.0. If this happens, the loop is terminated. In other cases, the program should increase the number of complete iterations for the pixels that are still inside the circle. The mask is then ANDed with 4.0 to get 4.0 in the doublewords corresponding to such pixels, and 0.0 in other doublewords. This value is added to xmm6. In short, when the loop ends, xmm6 will contain 4.0 * number-of-complete-iterations for each of the 4 pixels. The value will be later used as an offset into our palette.

Now let's calculate the execution time for Pentium IV and find the bottlenecks in this code. The manual by Anger Fog describes how to perform such an analysis; if you are new to optimization for Pentium IV, please read the manual. See also official documents from Intel: Intel Architecture Optimization Reference Manual and Microarchitecture of the Pentium 4 Processor.

Firstly, memory access is not a bottleneck here, because the calculation takes much more time than writing to memory. For the usual resolution 1024x768, the program writes 1024*768*4 bytes = 3 Mb. Typical throughput for modern DDR memory may be about 1-2 Gb/s. A rough estimation shows that the memory access will take 1.5-3 milliseconds, while the real execution time for this code is 70-80 ms. An analysis of the code gives the same result: memory is accessed only twice for 1 pixel (see the code in the next chapter), but the inner loop is executed ITER times (typically 64 times or so), and it includes SSE instructions with long latencies.

That's why different tricks for optimizing cache performance are of no use for this code. The same for instruction decoding: the loops are so small that they fit into the trace cache entirely, and they are decoded only once. The real bottleneck is the long latencies and limited throughput of SSE instructions.

For simplicity sake, let's presume that the pipeline is initially empty, and the whole code is in the trace cache. Register names xmm1, xmm2, etc., are shortcuts to x1, x2 to fit into the picture.

The first three instructions (MOVAPS x2, x0, MULPS x0, x0, and MOVAPS x3, x1) are sent from the trace cache into the op queue. The FP/SSE execution cluster has two ports: one for add, mul, div operations, called fp unit in Agner Fog's manual, and one for moves between registers and stores (the mov execution unit). These two units can start executing the MOVAPS and MULPS instructions at the same time.

At the second clock cycle, the next three instructions go to the queue. From them, MOVAPS x3, x1 and ADDPS x1, x1 can execute in parallel. The reciprocal throughput for the MOVAPS instruction is 1, thus the second instruction can begin at this cycle.

The MOVAPS instruction has a latency of 6 clock cycles, and MULPS x1, x2 depends on the results of MOVAPS x2, x0 (dependency is shown with arrows on the chart). So MULPS can start at the sixth cycle (by this time, the queue should be full of ops waiting to execute).

The next instruction, MOVAPS x2, x0, depends upon MULPS x0, x0. The MULPS instruction also has an additional latency of 1, meaning it needs to wait for 1 extra clock cycle if the next op goes to a different execution unit. MULPS and MOVAPS are from different execution units fp and mov, and I have to insert a delay of one cycle between them.

Now, the MULPS x3, x3 instruction. The multiplication subunit (FP multiplier in terms of Intel) can execute one multiply every two cycles, thus the second MULPS instruction has to wait for one cycle. It will be started at the eighth cycle.

The next five instructions go to the FP addition subunit (FP adder). All of them have a latency of 4 clock cycles. ADDPS x1, x5 can begin at the twelfth cycle, when the results of the MUL x1, x2 multiplication will be ready. The same for SUBPS x0, x3; it starts at the fourteenth cycle. The ADDPS x2, x3 instruction could begin earlier than at the sixteenth cycle, but the FP adder throughput limits the number of simultaneously running instructions to 2. The sequence SUBPS x0, x3; ADDPS x2, x3; ADDPS x0, x4; CMPPS x2, x7 perfectly matches this requirement of the FP adder. The two neighboring instructions are from different dependency chains, thus they are fully independent and can execute in parallel.

Our purpose is to complete the x0 = x0 ^ 2 - x1 ^ 2 + x4, x1 = 2 * x0 * x1 + x5 calculation as soon as possible. On the chart, the instructions that compute the new values for x0 and x1 are marked with different shades of gray, and non-relevant instructions are white. You need to schedule the "gray" instructions earlier than others because the next iteration of the loop can't start without the new values for x0 and x1. The ANDPS and MOVMSKPS instructions from the previous iteration can execute in parallel with the next iteration, provided that the jump will be predicted correctly. So you can schedule them later than gray instructions.

Time measurements I made confirmed these considerations. There is now a long delay between MOVAPS x2, x0 and ADDPS x2, x3 (see the chart). But if you try to put ADDPS x2, x3 earlier than SUBPS x0, x3, the overall execution time will increase. This means the delay doesn't matter as long as x0 and x1 are calculated early.

You see that the speed of the code depends on both the instruction latencies and the throughput, because there are three quite independent chains, which can execute at the same time. The critical path is marked with dark gray color. MOVAPS adds an unnecessary delay (may be, if x86 architecture had three-address instructions like RISCs, the code would have execute faster, who knows). The subunits are not utilized fully. At the first stage of the loop (cycles 0-12), a great load is put on the MOV unit and the FP multiplier; later, in cycles 14-22, the FP adder is overloaded, and the FP multiplier is not used at all. You could take instructions from two iterations and shuffle them in order to get more equable load, but you need more SSE registers for this. With AMD64 (or Intel EM64T), 8 additional registers are available, so the code can be further optimized in 64-bit mode.

After drawing the chart, I ordered the instructions by their start time. For example, MOVAPS x2, x0 and MULPS x0, x0 will be the earliest started instructions, so I put them first in the code. Then MOVAPS x3, x1 and ADDPS x1, x1 begin, and they are the next instructions in the source code. Ordering the instructions reduces the number of ops waiting in the queue. Now there is no need for your processor to reorder the instructions at run-time: they are already ordered in accordance with their latencies, throughput, and dependencies.

But what about branch prediction in this code? Can it be a bottleneck? In fact, the penalties for mispredicted branches take a lot of execution time for this program. But the number of complete iterations is the result we want to obtain. You can't perfectly predict how many times the loop will be executed, because this value is itself the result of the program. If you know exactly how many times the loop is repeated for each pixel, then why are you running this program? :)

I've randomly selected two close-up pictures of the sets, then calculated the distribution of the iteration's number for them and for one large-scale image:

The blue bars denote the large-scale image of the Mandelbrot set; the red and yellow bars show two close-up pictures. ITER is equal to 64 in my program, so the chart shows the frequencies of the points from 0 to 5 iterations, from 6 to 11 iterations, and so on to 60 iterations and more. Obviously, the minimal and maximum numbers of iterations constitute the largest parts of the picture. For the blue bar, the minimal value is 0-5 iterations (59% of all pixels); for the red bar, it's 12-17 (43%); and for the yellow bar, it's 30-35 (8%) and 36-41 (15%). The minimal number of iterations corresponds to the vast background area filled with one color, and the maximum number of iterations means the black points that belong to the set. Thus, 26% of the pixels belong to the Mandelbrot set on the large picture, and 21% and 62% of the points belong to the set on the two close-ups.

From this analysis, you can see that if the loop is terminated, there is a high probability that it will be terminated early, at the minimal number of iterations. But if the loop is not terminated early, it usually will not be terminated at all. This statistics is interesting, but I couldn't make use of it in the code. May be, you, the reader, can do it. Any suggestions will be appreciated.

Let's make some conclusions about this MOV-ADD method:

- The general strategy is to calculate the values that will be needed in the next iteration (in our case,

x0 and x1) as early as possible. These calculations constitute a critical path of the program, and the overall execution time depends on their finish time.

- By scheduling independent instructions to execute simultaneously, I tried to improve the instruction-level parallelism (ILP). For example, the first four instructions execute addition, multiplication, and two moves in parallel.

- The instructions are ordered by their start time, which helps to reduce the length of the op queue.

FFFF method

FFFF v3.2.2, the Mandelbrot set generator mentioned above, uses another method. FFFF is open-source, so it is fully legal to publish its code here:

MOVAPS xmm0,xmm4

XORPS xmm6,xmm6

MOVAPS xmm1,xmm5

mov ecx, ITER

ILOOP:

MOVAPS xmm2, xmm0

MULPS xmm2, xmm1

MULPS xmm0, xmm0

MULPS xmm1, xmm1

addps xmm2, xmm2

movaps xmm3, xmm0

addps xmm3, xmm1

cmpltps xmm3, xmm7

movmskps eax, xmm3

test eax, eax

jz EXIT

subps xmm0, xmm1

movaps xmm1, xmm2

ANDPS xmm3, xmm7

ADDPS xmm6, xmm3

ADDPS xmm0, xmm4

ADDPS xmm1, xmm5

dec ecx

jnz ILOOP

EXIT:

FFFF tries to compare x0^2 + x1^2 with 4.0, and only if the comparison fails, it finds new values for x0 and x1. If the comparison returns true, the loop is terminated, and there is no need to calculate x0 and x1. Although this may seem a good strategy, my experiments show it is not. Look at the long chain: MOVAPS xmm3, xmm0; ADDPS xmm3, xmm1; CMPLTPS xmm3, xmm7; MOVMSKPS eax, xmm3. When this chain runs, there are a lot of possibilities to utilize free execution units and to run the second chain in parallel. But FFFF authors didn't insert any instruction between the instructions of this chain. The scheduler has to reorder instructions itself. Sometimes, it fails, and the execution units are left unused. For example, there is so large a distance between MULPS xmm1, xmm1 and SUBPS xmm0, xmm1 in the code that the second instruction may be not sent to the queue in time. In this case, an additional delay should be added between those instructions. The same for ADDPS xmm2, xmm2 and MOVAPS xmm1, xmm2: there is a small chance that they will be executed without a delay.

The chart shows the ideal case without any delays. Even then x0 takes 20 clock cycles to calculate, and x1 takes 27. For my MOV-ADD method, the figures are 16 and 22 clock cycles, respectively. The critical path (marked with dark gray color) includes two MOVAPS instructions having long latencies, while MOV-ADD method uses only one MOVAPS on its critical path.

There are additional latencies before MOVAPS x1, x2 and MOVAPS x3, x0, because the previous instruction was executed in a different unit. The ADDPS x3, x1 is put off for one cycle due to the limited throughput of the FP adder.

In any other way, MOV-ADD is similar to FFFF. Both methods use SSE to calculate 4 pixel values together, both have the same limitation of precision and the same jerking effect when zooming. I used a similar algorithm, but arranged instructions differently to improve performance. Other distinctions between FFFF and my program will be shown later.

In the FFFF code above, the register names are changed to the names used in MOV-ADD (xmm0 for zx, xmm1 for zy, and so on). This change doesn't affect performance, but makes the methods' code easier to compare.

Vodnaya method

I also developed Vodnaya, a method optimized for Pentium M. The name is fully meaningless, so if it's hard for you to pronounce Russian words, you can just call it Vod-method or V-method ;). It outperforms both MOV-ADD and FFFF methods on Pentium M and Pentium III. Only a little is known about the Pentium M microarchitecture, so I was unable to do an analysis of latencies and throughput. After much trial and error, I found a sequence of instructions that seemed to be the fastest on these processors. The method is also a champion in the number of instructions: it is one instruction smaller than MOV-ADD or FFFF.

MOVAPS xmm0,xmm4

XORPS xmm6,xmm6

MOVAPS xmm1,xmm5

mov ecx, ITER

ILOOP:

MOVAPS xmm2, xmm1

MULPS xmm2, xmm2

MULPS xmm1, xmm0

MOVAPS xmm3, xmm2

MULPS xmm0, xmm0

ADDPS xmm2, xmm0

CMPLEPS xmm2, xmm7

ADDPS xmm1, xmm1

SUBPS xmm0, xmm3

ADDPS xmm1, xmm5

ADDPS xmm0, xmm4

MOVMSKPS eax, xmm2

test eax, eax

jz EXIT

ANDPS xmm2, xmm7

ADDPS xmm6, xmm2

sub ecx, 1

jnz ILOOP

EXIT:

The zx^2+zy^2 sum is calculated in the temporary register xmm2. zy^2 is copied here to save it from being overwritten when the program will subtract zx^2-zy^2. New values for zx and zy are computed in place, in the xmm0 and xmm1 registers. I guess the whole method is fairly graceful. Unfortunately, it's not the fastest one for Pentium-IV and Athlon XP processors.

The Great Battle: MOV-ADD vs. FFFF vs. Vodnaya

And now, the speed comparison of these three methods:

In the set of tests, the dimension of the image was 1024x768 pixels; the time was measured with the RDTSC instruction (see the source code in the SSEroutines_test.asm file). I repeated the tests five times and took the minimal result for each method. Times in the chart are expressed in millions of clock cycles. The lower times are better. After the names of processors, their CPUID signatures are shown. For example, Celeron F13 is a family 15, model 1, stepping 3 processor, namely a trimmed down Pentium IV. Its caches are smaller than those of Pentium IV, but the core is the same, that's why the results of this Celeron should be almost equal to the results of Pentium IV (sorry, I was unable to get Pentium IV for the tests). "Rabbit" means Julia set with C = -0.12+0.74i.

Evidently, MOV-ADD is the best method for Pentium IV and Athlon XP processors. Vodnaya shows good results on Family 6 processors from Intel (in particular, Pentium III and Pentium M). FFFF is definitely a loser on every type of processor. The speed improvement of MOV-ADD in comparison to FFFF is about 12% on Pentium IV, and the difference between Vodnaya and FFFF is 7-11% for Pentium M and Pentium III.

The readymade program available uses Vodnaya on family 6 processors from Intel and MOV-ADD method on other processors. The method is selected at run-time using the processor signature retrieved with the CPUID instruction.

CvtTss2si

After finishing the calculation, the program should translate the results into color values. The single line of code (*b++ = a[i];) can make the translation in C, but in assembler language, you have to do more work.

The program initializes the xmm6 to zero and adds 4.0 to it at each iteration (see the code of any method above). If the inner loop is not terminated, it is repeated ITER times. When the loop is over, the number of complete iterations is multiplied by 4 in the xmm6 register provided that the point doesn't belong to the Mandelbrot set, and there is the ITER * 4 value there otherwise. The same trick with the last element of array can be done: add one element to the array and make its value equal to black. By doing so, you can use one coloring code to handle points that are in the Mandelbrot set and the points that are not. FFFF v3.2.2 handles these types of points differently using a conditional move (it's a slower way, and the CMOV instruction is not supported on some old AMD processors).

Note that the xmm6 register holds the number of complete iterations multiplied by 4. FFFF divides it to get the index in the palette. For division, it uses the shr instruction, which is slow on Pentium IV processors with the model number lower than 3. But one element of the palette takes 4 bytes in memory. Thus FFFF divides the number by 4, and then multiplies it by 4 to get the memory offset. What a terrible waste of time! :) I do this in a much simpler way:

CVTTSS2SI eax, xmm6

mov eax, [esi + eax]

mov [edi], eax

The CvtTss2si instruction converts, with truncation, a single-precision floating-point value to an integer value, in the eax register. The number of iterations is, obviously, an integer number. It will be converted without rounding, that's why the rounding mode is unimportant here. The next instruction extracts the color from palette, and the last one writes the color into the bitmap. The bitmap may be located in the video memory or in the RAM (don't care about this for now).

There are other instructions for converting floating-point numbers (namely, CvtPs2Pi and CvtPs2Dq), but none of them write the result to general-purpose registers. You have to store the number in memory and immediately read it from the same location, which can cause additional delays on a Pentium IV. I tested the code with CvtPs2Dq and found that it is not faster than the code using CvtTss2si.

The Power of FASM Macros

Writing several variants of the same code for the Mandelbrot set and for the Julia set, for black and white image and for color image, for SSE and for SSE2, requires a lot of routine work. Thankfully, most assemblers have some special tools that can do this work for you. The tools are macroinstructions and conditional assembly. Macros let you repeat the sequence of instructions with different parameters, and conditional assembly allows inserting instructions depending on these parameters.

I chose FASM 1.62 from several assemblers, because it is tiny, fast, and has a rich macro syntax. The FASM manual describes the syntax in detail. Here is a small piece from the Vodnaya method to illustrate the use of macros in my program:

macro JuliaMandelPaint color, type {

ADDPS xmm1, xmm1

SUBPS xmm0, xmm3

if type = Julia

ADDPS xmm1, dqword[cy1]

ADDPS xmm0, dqword[cx1]

else if type = Mandel

ADDPS xmm1, xmm5

ADDPS xmm0, xmm4

end if

}

mov eax, [fractaltype]

test eax, eax

jz MANDELBROT

JULIA:

JuliaMandelPaint 1, Julia

ret

MANDELBROT:

JuliaMandelPaint 1, Mandel

ret

If the argument type is equal to Julia, FASM will generate the code that adds cx1 to xmm0 and cy1 to xmm1, else it will use the values from the registers xmm4-xmm5. Both variants of the code will be generated: the first at the label JULIA, and the second at MANDELBROT. You just check the variable fractaltype and decide which variant to use.

You could say that there is an easier way to do this:

mov eax, [fractaltype]

test eax, eax

jz MANDELBROT

ADDPS xmm1, dqword[cy1]

ADDPS xmm0, dqword[cx1]

jmp NEXT

MANDELBROT:

ADDPS xmm1, xmm5

ADDPS xmm0, xmm4

NEXT:

Why not use this code? Because the check is located in deeply nested loops, and the comparison will be repeated several million times, while it needs to be done only once. Needless to say about delays that can occur if the conditional jump will be mispredicted... So it's advantageous to move the comparison out of the loop. Macros help to write this shortly.

The next interesting task is adapting the methods for SSE2. SSE2 instructions operate on two double-precision values in parallel. In other aspects, they have no difference from SSE. Even the latencies and throughputs for SSE2 are the same as for SSE.

So you have to do a massive search and replace to change ADDPS to ADDPD, MULPS to MULPD, MOVAPS to MOVAPD, etc. Then you adjust pointer increments and get two functions: one for SSE and one for SSE2. This approach is used in FFFF and several other programs. There is one serious inconvenience with it. When you want to update the program, you have to change the same code in both functions. It's tedious and frustrating. Sometimes you correct one function, but forget to change another.

The idea is to create a macro called MOVAP that will be replaced with MOVAPS for SSE and MOVAPD for SSE2. Now, instead of two functions you write only one and use MOVAP, MULP, and ADDP macros in it. During the compilation, the real SSE or SSE2 instructions are substituted for these macros:

macro MOVAP a, b {

if ssewidth = 4

MOVAPS a, b

else

MOVAPD a, b

end if

}

macro ADDP a, b {

if ssewidth = 4

ADDPS a, b

else

ADDPD a, b

end if

}

macro MULP a, b {

if ssewidth = 4

MULPS a, b

else

MULPD a, b

end if

}

Don't hurry to write this code! A much more elegant solution exists:

macro defineinstr instr, [args] {

common

if ssewidth = 4

instr#S args

else

instr#D args

end if

}

macro MOVAP a, b {defineinstr MOVAP, a, b}

macro ADDP a, b {defineinstr ADDP, a, b}

macro MULP a, b {defineinstr MULP, a, b}

The macro defineinstr takes a variable number of arguments. For example, it can be used for SHUFPS with three arguments and for ADDPS with two arguments. The macro issues the instruction with a S suffix if ssewidth = 4, or the same instruction with a D suffix otherwise. The # operator concatenates names; common means the instruction will be repeated only once.

You can make this code even shorter:

macro defineinstr instr {

macro instr [args] \{

\common

if ssewidth = 4

instr#S args

else

instr#D args

end if

\}

}

defineinstr MOVAP

defineinstr ADDP

defineinstr MULP

There are two nested macros here. The outer macro, defineinstr, defines the inner one, instr. Note that instr is also the argument of defineinstr, so when you write defineinstr MOVAP, a macro named MOVAP will be defined. This is exactly what I want. Curly brackets and the common directive need to be escaped with backslashes because of some strange parsing problems in the internals of FASM. Now the definition of a new SSE/SSE2 instruction is extremely short and easy: just write defineinstr and the name of the instruction. The number of arguments doesn't matter.

I hope you are convinced now that FASM macros are very powerful and graceful. If not, just try to make a C preprocessor macro with variable number of arguments and compare it with the FASM equivalent!

Blitting Bitmaps with GDI and DirectDraw

The SetPixel function seems to be the slowest possible way to output the pixels to the screen:

case WM_PAINT: {

PAINTSTRUCT ps;

HDC hdc = BeginPaint(hWnd, &ps);

for(x = 0; x < w; ++x)

for(y = 0; y < h; ++y)

SetPixel(hdc, x,y, b[i]);

EndPaint(hWnd, &ps);

}

Use this function only to set 1 or 2 pixels; it's too slow for large images. Here is a simplified version of my program which is much faster:

int w, h; HBITMAP hBMP = 0, holdBMP; HDC hmem;

BITMAPINFOHEADER bi = {sizeof(BITMAPINFOHEADER), 0, 0, 1, 32, BI_RGB};

void ReDraw() {

PaintJuliaSSE(b, ceil(w, 4), h);

SetDIBits(hmem, hBMP, 0, h, b,

(BITMAPINFO*)&bi, DIB_RGB_COLORS);

}

LRESULT CALLBACK WndProc(HWND hWnd,UINT msg,WPARAM wParam,LPARAM lParam) {

switch(msg) {

case WM_SIZE: {

HDC hdc = GetDC(hWnd);

if(hBMP) { DeleteObject(SelectObject(hmem, holdBMP)); DeleteDC(hmem); }

w = LOWORD(lParam), bi.biWidth = ceil(w, 4),

h = HIWORD(lParam), bi.biHeight = h;

bi.biSizeImage = bi.biWidth * h * 4;

b = (long*)realloc(b, bi.biSizeImage);

hBMP = CreateCompatibleBitmap(hdc, ceil(w, 4), h);

hmem = CreateCompatibleDC(hdc);

holdBMP = (HBITMAP)SelectObject(hmem, hBMP);

ReleaseDC(hWnd, hdc);

ReDraw();

return 0; }

case WM_PAINT: {

PAINTSTRUCT ps; HDC hdc = BeginPaint(hWnd, &ps);

BitBlt(hdc, 0, 0, w, h, hmem, 0, 0, SRCCOPY);

DrawPolyline(hdc);

EndPaint(hWnd, &ps);

return 0; }

case WM_DESTROY:

DeleteObject(SelectObject(hmem, holdBMP)); DeleteDC(hmem);

PostQuitMessage(0); return 0;

default:

return DefWindowProc(hWnd,msg,wParam,lParam);

}

}

You call the ReDraw function every time the image is fully updated, e.g., zoomed in or out. It uses PaintJuliaSSE to generate the bitmap of the fractal in b. Then the SetDIBits function is called. It copies the whole b array to the hmem memory DC.

In response to WM_SIZE, the program sets a new size of the bitmap and recreates it. Also, the memory for the bitmap should be reallocated, and the fractal should be fully redrawn. The handler for WM_PAINT just blits (copies) the memory DC to the screen and adds a polyline that shows the orbit of the function.

The image of the fractal is stored in the memory DC, which exists during the whole lifetime of the program. Having this image, you can quickly draw the moving polyline by blitting the stored image and drawing the new position of the polyline above. This will cause a little flicker of the polyline, but it's tolerable. Adding the second memory DC and the second bitmap could remove the flicker completely, but then the program will be slow and memory-intensive.

SSE instructions operate on 4 values in parallel, and all SSE methods shown above require the number of pixels in the line to be divisible by 4. That's why the width (w) is rounded up with the ceil(w) function. The bitmap becomes a little larger than needed, and in the WM_PAINT handler, you blit only the w * h rectangle from it.

Besides GDI, the program also supports DirectDraw. I won't show the code here, because it's very long and boring (error handling takes a lot of space). In essence, it is very close to the GDI code. An off-screen surface is created; the fractal is rendered into it. On WM_PAINT, you blit the off-screen surface to the primary surface (to the screen) and draw the polyline. Modern video cards store both surfaces in the video memory, thus blitting with DirectDraw can be 50 times faster than GDI blitting (I get this result on my laptop with a simple on-board video chip, Intel 915 GM). DirectDraw improves the overall speed by 5-6% in comparison with GDI.

DirectDraw is supported for Windows 98 and higher. For the fans of Windows 95, I wrote a few lines of code so the program will gracefully degrade to GDI if DirectDraw is not available :).

Conclusion

My program is a very fast Mandelbrot and Julia set generator using SSE/SSE2 and DirectDraw. I will not claim it's "the fastest fractal generator for Win32", as the author of FFFF did, but you see it's pretty fast and easy-to-use. Some points still need more work, for example:

- there are faster algorithms which "guess" the values of the neighboring pixels;

- you can further optimize MOV-ADD and Vodnaya methods to get the maximum performance on 64-bit processors;

- may be, some tricky approach to optimizing branch prediction exists, but I didn't find it;

- the program can't save the current position and the C parameter to let you return to them in future (the next version will do this);

- changing the palette is not supported, but it was not my goal.

So the code is good, but not perfect. Please add your comments and suggestions on how to improve it.

You can use the techniques presented in this article in many different graphic applications. The analysis of latencies and throughputs helps you to find bottlenecks. Then you can exclude long-latency operations from the critical path, make several independent chains in your code, and increase instruction-level parallelism by reordering the instructions. Finally, the assembler macros free you from such dull work as rewriting the same code for SSE2.

Credits and Thanks

Thanks to Daniele Paccaloni, the author of FFFF. Viewing the source of his program helped me to find suboptimal solutions in my own code and, generally, to make my program better. Thank you, Daniele!

Thanks to my teacher, Tatyana Valenteenovna Korablina, for providing useful information about fractals and non-linear dynamics. I also feel grateful to people who helped me with testing: Anton Orlov, Larisa Alekseevna Morozova, and Victor "vito". Special thanks to Stas, who stayed at work on that Friday evening and let me use a lot of computers for tests.

General

General  News

News  Suggestion

Suggestion  Question

Question  Bug

Bug  Answer

Answer  Joke

Joke  Praise

Praise  Rant

Rant  Admin

Admin

That said, I found this article to be very well written and interesting. I'm impressed with your command of English, your general writing skill, and of course, the depth and clarity of what you had to say. Thank you for contributing this to the community.

That said, I found this article to be very well written and interesting. I'm impressed with your command of English, your general writing skill, and of course, the depth and clarity of what you had to say. Thank you for contributing this to the community.