|

Blue Iris latest stable version.

Coral small

YOLOv5 .NET medium

In ver. 2.6.2, with the Coral I tried changing models, and changing to medium from small.

This just resulted in apparent crashes of the module, and a lot of difficulty getting results to Blue Iris. Also, while I was making those changes, I kept losing the Multi-TPU setting. I have uninstalled and reinstalled CPAI 2.6.2 with no good results, and also uninstalled and reinstalled the Coral version 2.2.2 module with "Do not use download cache" checked.

I may be imagining things, but I thought I saw a period of good results with the Coral ver 2.2.0 module, but I can't seem to get back to it.

For me, the Yolo modules with ipcam-combined and ipcam-dark work pretty well.

I suppose I should download the current code and see if I can figure out what increments the failed inferences counter. It must be in there somewhere.

In some discussions with @mailseth, he asked if I see anything in the logs. I looked, but replied that nothing jumps out at me. Of course, I don't know what I'm looking for.

|

|

|

|

|

Coral on Windows is just not great. There's no getting around it, I'm sorry. Failed inferences could be anything from the device overheating and shutting down, to a memory issue, to the USB driver failing. I constantly have issues on Windows with Coral, far less so on Linux (or even macOS 11).

cheers

Chris Maunder

|

|

|

|

|

The Coral accelerator in the Windows Blue Iris Code Project machine is an M.2, not USB.

My impression is that although it is very fast, accuracy seems poor, and with no custom models available it reports everything it finds, even when it's wrong.

My Coral USB device on the Ubuntu machine has been flawless with Frigate NVR for about 5 months. It is the only detector specified, so I know it is working.

My big gripe with Coral is that the test program the installation web page tells you to use will not run without an older version of Python and pycoral. (I think. I haven't tried it for several months.) I like to be able to use the manufacturer's diagnostics to verify functionality.

|

|

|

|

|

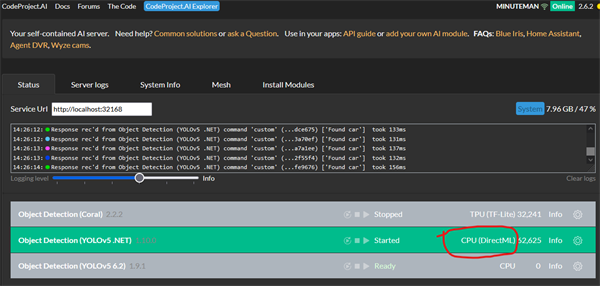

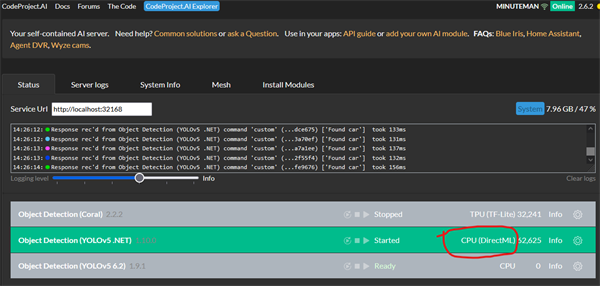

I am seeing the YOLOv5 .NET module displaying CPU (DirectML) despite the module info screen showing that GPU is enabled. Did I read somewhere that there is something wrong in the code that displays CPU instead of GPU on the status screen? I don't remember where I heard this.

|

|

|

|

|

Thanks very much for your report. Could you please share your System Info tab from your CodeProject.AI Server dashboard?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

Server version: 2.6.2

System: Windows

Operating System: Windows (Microsoft Windows 10.0.19045)

CPUs: Intel(R) Core(TM) i5-7500 CPU @ 3.40GHz (Intel)

1 CPU x 4 cores. 4 logical processors (x64)

GPU (Primary): Intel(R) HD Graphics 630 (1,024 MiB) (Intel Corporation)

Driver: 31.0.101.2111

System RAM: 16 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.5

.NET SDK: Not found

Default Python: Not found

Go: Not found

NodeJS: Not found

Rust: Not found

Video adapter info:

Intel(R) HD Graphics 630:

Driver Version 31.0.101.2111

Video Processor Intel(R) HD Graphics Family

System GPU info:

GPU 3D Usage 0%

GPU RAM Usage 0

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

|

|

|

|

|

Thanks very much for that. And are you able to confirm that the GPU is actually being used for that module, but on the CodeProject.AI Server dashboard, CPU is displayed?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

I'm experiencing the exact same issue:

Server version: 2.6.2

System: Windows

Operating System: Windows (Microsoft Windows 10.0.19045)

CPUs: Intel(R) Core(TM) i5-8259U CPU @ 2.30GHz (Intel)

1 CPU x 4 cores. 8 logical processors (x64)

GPU (Primary): Intel(R) Iris(R) Plus Graphics 655 (1,024 MiB) (Intel Corporation)

Driver: 31.0.101.2127

System RAM: 16 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.7

.NET SDK: Not found

Default Python: 3.10.7

Go: Not found

NodeJS: 12.16.3

Rust: Not found

Video adapter info:

Intel(R) Iris(R) Plus Graphics 655:

Driver Version 31.0.101.2127

Video Processor Intel(R) Iris(R) Plus Graphics Family

System GPU info:

GPU 3D Usage 0%

GPU RAM Usage 0

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

|

|

|

|

|

Thanks very much for that. And are you able to confirm that the GPU is actually being used for that module, but on the CodeProject.AI Server dashboard, CPU is displayed?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

I haven't been back to the machine yet, but the system info screen shows GPU usage=0.

I looked at the performance monitor just now for GPU 0. It shows occasional 3D activity but nothing else, and memory is constant at 1%.

Maybe it's not using the GPU.

I again clicked enable GPU, log says GPU enabled when the module restarts.

Maybe uninstall and reinstall the module? I've uninstalled and reinstalled ver. 2.6.2 a couple of times already trying to figure out the Coral failed inferences. I just don't know.

|

|

|

|

|

In my case, GPU is not used.

CPU (DirectML) is utilised despite selecting Enable GPU

|

|

|

|

|

This is a naming issue. Here's the comment for the EnableGPU property:

public bool? EnableGPU { get; set; } = true;

cheers

Chris Maunder

|

|

|

|

|

How can we force the use of GPU?

|

|

|

|

|

You can't. That module uses DirectML which means it will use the GPU if it can, meaning if the correct drivers are installed, and if the GPU is supported by DirectML. With DirectML, the use of the internal GPU is assumed, so if it's not actually using the GPU it's not something (AFAIK) we can really influence.

The only thing I would say is to be careful when trying to determine if the GPU is being used or not. A quote from an issue regarding DirectML using Tensorflow:

Quote: One thing to keep in mind is that if you're using Task Manager to monitor GPU usage, it can sometimes be misleading because the default Task Manager GPU usage graph looks for 3D workloads which are different from compute workloads like tensorflow-directml
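
To make the 3D versus compute distinction concrete, here is a minimal Python sketch that groups GPU utilisation readings by engine type instead of looking only at the 3D graph. It assumes the readings come from the Windows "GPU Engine" performance counter set and that instance names end in `engtype_<name>`. The function name and the sample readings are hypothetical and are not taken from any machine in this thread.

```python
import re
from collections import defaultdict

# Assumption: instance names in the Windows "GPU Engine" counter set end in
# "engtype_<name>", for example "..._phys_0_eng_0_engtype_3D".
ENGTYPE = re.compile(r"engtype_(.+)$")


def utilisation_by_engine(samples):
    """Sum utilisation percentages per engine type.

    `samples` maps a counter instance name to a utilisation percentage.
    Names that do not match the assumed pattern are grouped under
    "unknown" rather than silently dropped.
    """
    totals = defaultdict(float)
    for instance, percent in samples.items():
        match = ENGTYPE.search(instance)
        engine = match.group(1) if match else "unknown"
        totals[engine] += percent
    return dict(totals)


# Hypothetical readings for illustration only.
example = {
    "pid_100_luid_0x00000000_0x0000A1B2_phys_0_eng_0_engtype_3D": 1.5,
    "pid_200_luid_0x00000000_0x0000A1B2_phys_0_eng_0_engtype_3D": 0.5,
    "pid_200_luid_0x00000000_0x0000A1B2_phys_0_eng_3_engtype_Compute": 12.0,
}
print(utilisation_by_engine(example))
```

If the non-3D engines show activity while the module is processing images, that would suggest the GPU is doing the work even though the 3D graph stays near zero.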

cheers

Chris Maunder

|

|

|

|

|

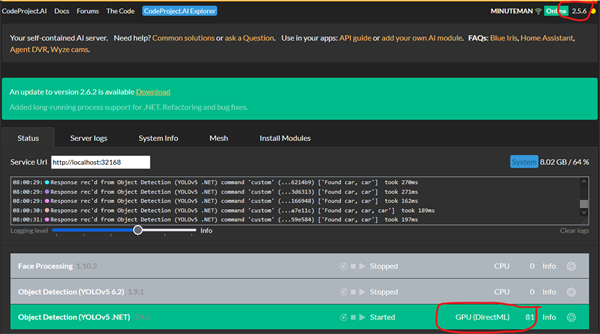

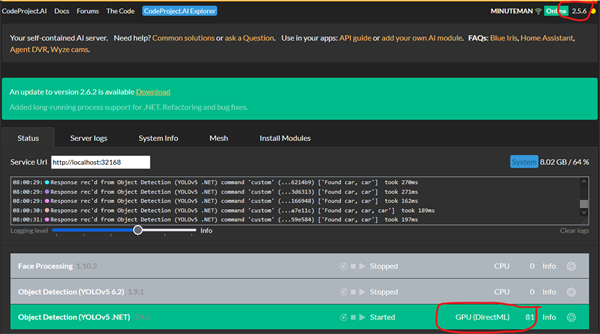

Interesting. I do recall that prior to upgrading to CPAI ver2.6.2 (using 2.5.6) the YoloV5.NET model displayed that it was utilising the GPU, however, it seems to utilise CPU with this upgrade. I haven’t rolled back to CPAI ver2.5.6 to confirm my recollection though.

|

|

|

|

|

Yes.

I'm going to try rolling back to 2.5.4.

|

|

|

|

|

Me too.

Rolled back to CPAI ver. 2.5.6. YOLOv5 .NET connected to GPU on startup, and shows it is using GPU (Intel 630) without any user intervention.

Server version: 2.5.6

System: Windows

Operating System: Windows (Microsoft Windows 10.0.19045)

CPUs: Intel(R) Core(TM) i5-7500 CPU @ 3.40GHz (Intel)

1 CPU x 4 cores. 4 logical processors (x64)

GPU (Primary): Intel(R) HD Graphics 630 (1,024 MiB) (Intel Corporation)

Driver: 31.0.101.2111

System RAM: 16 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.5

.NET SDK: Not found

Default Python: Not found

Go: Not found

NodeJS: Not found

Video adapter info:

Intel(R) HD Graphics 630:

Driver Version 31.0.101.2111

Video Processor Intel(R) HD Graphics Family

System GPU info:

GPU 3D Usage 10%

GPU RAM Usage 0

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

Module 'Object Detection (YOLOv5 .NET)' 1.9.3 (ID: ObjectDetectionYOLOv5Net)

Valid: True

Module Path: <root>\modules\ObjectDetectionYOLOv5Net

AutoStart: True

Queue: objectdetection_queue

Runtime: dotnet

Runtime Loc: Shared

FilePath: bin\ObjectDetectionYOLOv5Net.exe

Pre installed: False

Start pause: 1 sec

Parallelism: 0

LogVerbosity:

Platforms: all

GPU Libraries: installed if available

GPU Enabled: enabled

Accelerator:

Half Precis.: enable

Environment Variables

CUSTOM_MODELS_DIR = <root>\modules\ObjectDetectionYOLOv5Net\custom-models

MODELS_DIR = <root>\modules\ObjectDetectionYOLOv5Net\assets

MODEL_SIZE = MEDIUM

Status Data: {

"inferenceDevice": "GPU",

"inferenceLibrary": "DirectML",

"canUseGPU": true,

"successfulInferences": 179,

"failedInferences": 0,

"numInferences": 179,

"averageInferenceMs": 245,

"histogram": {

"person": 63,

"car": 262,

"bus": 19,

"truck": 2

},

"numItemsFound": 346

}

Started: 16 May 2024 7:52:55 AM Central Standard Time

LastSeen: 16 May 2024 8:07:00 AM Central Standard Time

Status: Started

Requests: 179 (includes status calls)
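
For anyone comparing versions, the Status Data block above can be checked with a few lines of Python rather than by eye. This is only a sketch: it assumes the status JSON has been copied out of the module info screen as text, and `summarise_status` is a made-up helper name, not part of CodeProject.AI. The field names are the ones shown in the dump above.

```python
import json


def summarise_status(status_json):
    """Report which device a module says it used and how often inference failed."""
    status = json.loads(status_json)
    total = status.get("numInferences", 0)
    failed = status.get("failedInferences", 0)
    return {
        "device": status.get("inferenceDevice", "unknown"),
        "library": status.get("inferenceLibrary", "unknown"),
        # Guard against division by zero on a freshly started module.
        "failure_rate": failed / total if total else 0.0,
    }


# Values copied from the 2.5.6 status data above (histogram omitted).
sample = """
{
  "inferenceDevice": "GPU",
  "inferenceLibrary": "DirectML",
  "canUseGPU": true,
  "successfulInferences": 179,
  "failedInferences": 0,
  "numInferences": 179,
  "averageInferenceMs": 245
}
"""
print(summarise_status(sample))
```

Running the same check on status data from 2.6.2 would show whether only the reported device differs or whether the failure rate changes as well.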

|

|

|

|

|

So it looks like it's a CPAI ver2.6.2 issue then.

YoloV5 .NET GPU works successfully on CPAI ver2.5.6 but doesn't in CPAI ver2.6.2

|

|

|

|

|

This is what I was referring to.

https://www.codeproject.com/Messages/6002999/Re-stupid-me-Windows-11-upgrade

Thanks very much for the report. There is a bug with 2.6.2 where the dashboard displays CPU, but it is actually using GPU (which the DirectML indicates). So you should actually be using GPU. Unless you have some monitoring tools that indicate that's not happening, you might actually be OK here.

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

Just installed CPAI ver 2.6.5 this morning and can confirm that YOLOv5.NET is utilising the GPU and shows GPU on the dashboard!

Thank you for your help with fixing this issue team!

|

|

|

|

|

I have a fresh install of CodeProject.AI 2.6.4 on Ubuntu Server 24.04. Object detection installed fine during the install, but when I try to install LPR and Training I get a 404 error for both of them.

Server version: 2.6.4

System: Linux

Operating System: Linux (Ubuntu 24.04)

CPUs: 12th Gen Intel(R) Core(TM) i7-12700K (Intel)

1 CPU x 12 cores. 20 logical processors (x64)

GPU (Primary): NVIDIA GeForce RTX 2060 (6 GiB) (NVIDIA)

Driver: 535.161.08, CUDA: 12.2 (up to: 12.2), Compute: 7.5, cuDNN:

System RAM: 31 GiB

Platform: Linux

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.18

.NET SDK: 7.0.118

Default Python: 3.12.3

Go: Not found

NodeJS: Not found

Rust: Not found

Video adapter info:

TU104 [GeForce RTX 2060] (rev a1):

Driver Version

Video Processor

System GPU info:

GPU 3D Usage 10%

GPU RAM Usage 1.9 GiB

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

|

|

|

|

|

Are you still getting 404s?

cheers

Chris Maunder

|

|

|

|

|

Upgraded to 2.6.2, and the intermittent Blue Iris AI Error 500s are back.

Can anyone explain the actual root cause of these errors? I'm getting a bit tired of the game between BI and CodeProject where updating one or the other has a 75% chance of re-introducing these errors.

Thanks

|

|

|

|

|

I'm sure you've reported stuff before but it's extremely difficult for us to remember everyone's details on everything.

Can you please let us know:

- what version of the server you're using

- on which module you're seeing the error

- the actual error (a screenshot would be good)

- whether you have tested the image that's throwing the 500 using the CodeProject.AI Explorer, which uses the same API as Blue Iris
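
If the Explorer is awkward for testing many images, the last check can also be scripted. The sketch below posts one image to the server and returns the HTTP status together with the response body. It assumes the server listens on port 32168 (as in the system info dumps in this thread) and that object detection is exposed at `/v1/vision/detection` with the image sent in a form field named `image`. The helper names and the example file name are hypothetical.

```python
import json
import urllib.error
import urllib.request
import uuid


def build_multipart(image_bytes, filename="snapshot.jpg"):
    """Build a multipart/form-data body with a single 'image' file field."""
    boundary = uuid.uuid4().hex
    body = b"".join([
        f"--{boundary}\r\n".encode(),
        f'Content-Disposition: form-data; name="image"; filename="{filename}"\r\n'.encode(),
        b"Content-Type: application/octet-stream\r\n\r\n",
        image_bytes,
        f"\r\n--{boundary}--\r\n".encode(),
    ])
    return body, f"multipart/form-data; boundary={boundary}"


def detect(image_path, url="http://localhost:32168/v1/vision/detection"):
    """POST an image and return (HTTP status, parsed JSON or raw error text)."""
    with open(image_path, "rb") as f:
        body, content_type = build_multipart(f.read())
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": content_type}, method="POST"
    )
    try:
        with urllib.request.urlopen(request, timeout=30) as response:
            return response.status, json.loads(response.read())
    except urllib.error.HTTPError as err:
        # A 500 from the server ends up here; keep the body for diagnosis.
        return err.code, err.read().decode(errors="replace")
```

Calling `detect("alert.jpg")` on a saved Blue Iris alert image (the file name is just an example) should return the same status code Blue Iris would see for that image, which helps separate a bad image from an intermittent module failure.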

cheers

Chris Maunder

|

|

|

|

|

For sure. For reference I was on 2.5.4 w/ Blue Iris 5.8.8.12 and had no AI errors.

Updated to CodeProject 2.6.2. Intermittent errors started every 15-30min on random cameras.

Decided to upgrade BI to the latest, 5.9.0.5. The errors still occur.

I'm using Coral TPU, tried various models and sizes. Multi-TPU disabled.

I don't know what images it's failing on unfortunately; BI doesn't give me the optics into that.

09-05-24-1817 hosted at ImgBB — ImgBB[^] 09-05-24-1817 hosted at ImgBB — ImgBB[^]

|

|

|

|
